Deep Search Lightning

What is Deep Search Lightning

Deep_search_lightning is a lightweight, pure web search solution designed for large language models. It supports multi-engine aggregated search, deep reflection, and result evaluation, offering a balanced approach between web search and deep research with a framework-free implementation for easy developer integration.

Use cases

Use cases include enhancing search functionalities in chatbots, improving information retrieval systems, and supporting academic research by providing high-quality search results and model evaluations.

How to use

To use Deep_search_lightning, create a conda environment, install the dependencies with pip, configure your model and search engines in a .env file, and integrate it into your application. It supports several search engines and lets you adjust the search depth and reflection strategy.

Key features

Key features include multi-engine aggregated search (Baidu, DuckDuckGo, Bocha, and Tavily), reflection strategies with controllable evaluation, custom pipelines for any LLM, OpenAI-style API compatibility, and built-in MCP server support.

Where to use

Deep_search_lightning can be used in various fields such as AI research, natural language processing, and any application requiring efficient web search capabilities integrated with large language models.

Deep Search Lightning

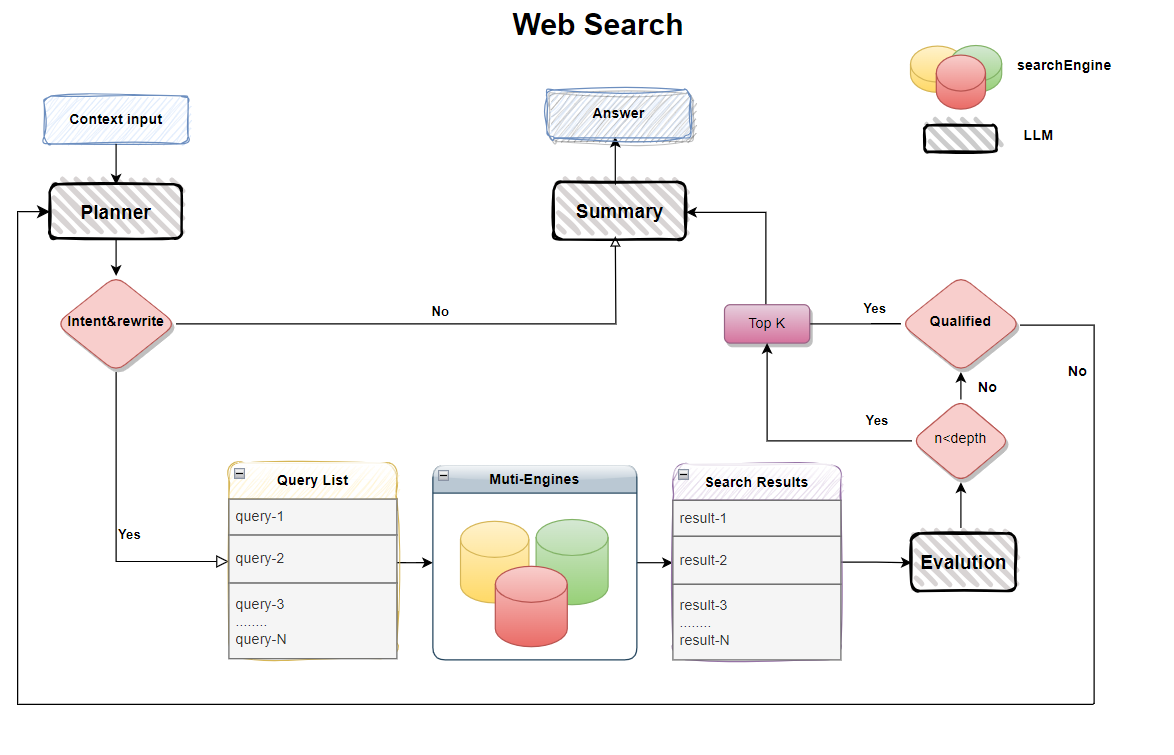

A lightweight, pure web search solution for large language models, supporting multi-engine aggregated search, deep reflection and result evaluation. A balanced approach between web search and deep research, providing a framework-free implementation for easy developer integration.

✨ Why deepsearch_lightning?

Web search is a common feature for large language models, but traditional solutions have limitations:

- Limited search result quality and reflection effectiveness

- Often require powerful models and paid search engines

- Small models often struggle with tool-calling patterns

- Contextual understanding can be unstable across different model sizes

Deep Search Lightning provides:

- Framework-free implementation with no restrictions

- Works with free APIs while maintaining good query quality

- Adjustable depth parameters to balance speed and results

- Reflection mechanism for model self-evaluation

- Supports models of any size, including smaller ones
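The depth and reflection ideas above can be illustrated with a minimal Python sketch. It is not the project's implementation: `deep_search`, `SearchState`, and the `search`/`reflect` callables are hypothetical names, and the sketch assumes the reflection step returns follow-up queries, with an empty list meaning the model judged the evidence sufficient. `max_depth` caps the number of reflection rounds, which is the speed/quality knob.

```python
from collections.abc import Callable
from dataclasses import dataclass, field

# Hypothetical signatures; the project's real search and LLM calls may differ.
SearchFn = Callable[[str], list[str]]               # query -> result snippets
ReflectFn = Callable[[str, list[str]], list[str]]   # (question, snippets) -> follow-up queries


@dataclass
class SearchState:
    question: str
    snippets: list[str] = field(default_factory=list)
    queries_run: list[str] = field(default_factory=list)


def deep_search(question: str, search: SearchFn, reflect: ReflectFn,
                max_depth: int = 2) -> SearchState:
    """Search, then let the model reflect on the results and propose follow-up
    queries. Stops when reflection returns no queries or after max_depth rounds."""
    state = SearchState(question)
    pending = [question]          # the first round searches the question itself
    depth = 0
    while pending:
        for query in pending:
            if query in state.queries_run:
                continue          # never repeat a query
            state.queries_run.append(query)
            state.snippets.extend(search(query))
        if depth == max_depth:
            break                 # depth budget spent: answer with what we have
        pending = reflect(question, state.snippets)
        depth += 1
    return state
```

With `max_depth=0` the loop reduces to a single plain web search; larger values trade latency for more rounds of follow-up queries.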

Experimental plans:

- Simplified design without web parsing or text chunking

- Considering adding RL-trained small recall models

✨ Features

- Multi-engine aggregated search:
  - ✅ Baidu (free)
  - ✅ DuckDuckGo (free, but requires a VPN)
  - ✅ Bocha (requires an API key)
  - ✅ Tavily (requires an API key, obtained by registering)

- Reflection strategies and controllable evaluation

- Custom pipelines for any LLM

- OpenAI-style API compatibility

- Pure source-code implementation for easy integration

- Built-in MCP server support
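Multi-engine aggregation can be sketched as follows, assuming each engine is wrapped in a hypothetical adapter function that returns `SearchResult` objects (the class and function names are illustrative, not the project's API). Engines are queried in parallel, an engine that fails (for example, a missing Bocha or Tavily key, or DuckDuckGo being unreachable) is skipped, and the results are interleaved round-robin and de-duplicated by normalized URL.

```python
from collections.abc import Callable
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass(frozen=True)
class SearchResult:
    title: str
    url: str
    snippet: str
    engine: str


# Hypothetical adapter type: one function per engine (Baidu, DuckDuckGo, ...).
Engine = Callable[[str], list[SearchResult]]


def url_key(url: str) -> str:
    """Normalize a URL so the same page returned by two engines is kept once
    (ignores scheme, a leading 'www.', trailing '/', and #fragment)."""
    parts = urlsplit(url.strip())
    key = parts.netloc.lower().removeprefix("www.") + parts.path.rstrip("/")
    return key + ("?" + parts.query if parts.query else "")


def aggregate_search(query: str, engines: dict[str, Engine],
                     per_engine: int = 5) -> list[SearchResult]:
    """Query every engine in parallel, skip engines that fail, then interleave
    the results round-robin and drop duplicate URLs."""
    with ThreadPoolExecutor(max_workers=max(1, len(engines))) as pool:
        futures = [pool.submit(fn, query) for fn in engines.values()]
    batches = []
    for fut in futures:               # the with-block has already waited for all
        try:
            batches.append(fut.result()[:per_engine])
        except Exception:
            continue                  # e.g. missing API key or network/VPN failure
    merged, seen = [], set()
    for rank in range(per_engine):
        for batch in batches:
            if rank < len(batch) and url_key(batch[rank].url) not in seen:
                seen.add(url_key(batch[rank].url))
                merged.append(batch[rank])
    return merged
```

Round-robin interleaving keeps the top results from every engine near the front instead of letting the first engine's list dominate.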

📺 DEMO

🔄 Pipeline
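One stage of the pipeline, the reflection and evaluation step listed under Features, can be sketched as below. The prompt text, the JSON reply format, and the `threshold` parameter are assumptions made for illustration, not the project's actual prompts. Parsing is deliberately tolerant because small models often wrap JSON in prose or code fences; an unparseable reply is treated as score 0 with no follow-up queries, which ends the search rather than looping.

```python
import json
import re
from dataclasses import dataclass

# Hypothetical prompt; doubled braces are literal braces for str.format.
REFLECT_PROMPT = """Check whether these search results answer the question.
Question: {question}
Results:
{results}
Reply with JSON only: {{"score": <0-10>, "follow_up_queries": [<strings>]}}"""


@dataclass
class Reflection:
    score: int                    # 0-10, how well the results answer the question
    follow_up_queries: list[str]  # empty list = stop searching


def build_reflect_prompt(question: str, snippets: list[str]) -> str:
    results = "\n".join(f"[{i}] {s}" for i, s in enumerate(snippets, 1))
    return REFLECT_PROMPT.format(question=question, results=results)


def parse_reflection(reply: str, threshold: int = 7) -> Reflection:
    """Tolerant parser: takes the span from the first '{' to the last '}',
    so prose or code fences around the JSON are ignored."""
    match = re.search(r"\{.*\}", reply, re.DOTALL)
    try:
        data = json.loads(match.group(0)) if match else {}
    except json.JSONDecodeError:
        data = {}
    try:
        score = max(0, min(10, int(data.get("score", 0))))
    except (TypeError, ValueError):
        score = 0
    raw = data.get("follow_up_queries", [])
    queries = ([q.strip() for q in raw if isinstance(q, str) and q.strip()]
               if isinstance(raw, list) else [])
    if score >= threshold:
        queries = []              # good enough: the evaluation says stop
    return Reflection(score, queries)
```

Raising `threshold` makes the evaluation stricter, so more rounds of follow-up queries are requested before the search stops.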

🚀 Quick Start

1. Installation

conda create -n deepsearch_lightning python=3.11
conda activate deepsearch_lightning
pip install -r requirements.txt
# Optional: for LangChain support
pip install -r requirements_langchain.txt

🔧 Configuration

- Rename .env.examples to .env

- Fill in your model settings (only OpenAI-style APIs are currently supported)

- Baidu search is enabled by default; configure the other engines as needed
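As a sketch of the configuration step, the code below reads `.env` with a small standard-library parser and builds an OpenAI-style `/chat/completions` request. Every variable name here (`LLM_API_KEY`, `LLM_BASE_URL`, `LLM_MODEL`, `BOCHA_API_KEY`, `TAVILY_API_KEY`, `ENABLE_DUCKDUCKGO`) is hypothetical; use the names defined in the project's `.env.examples`. The request is only constructed, never sent.

```python
import json
import os
import urllib.request
from dataclasses import dataclass
from pathlib import Path


def load_dotenv_file(path: str = ".env") -> dict[str, str]:
    """Minimal KEY=VALUE parser; blank lines, comments and malformed lines are
    skipped. (python-dotenv is the usual library for this.)"""
    values: dict[str, str] = {}
    p = Path(path)
    if not p.exists():
        return values
    for raw in p.read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip("\"'")
    return values


@dataclass
class Settings:
    api_key: str
    base_url: str
    model: str
    engines: list[str]


def load_settings(path: str = ".env") -> Settings:
    # Hypothetical variable names; real environment variables override .env.
    env = {**load_dotenv_file(path), **os.environ}
    engines = ["baidu"]                              # on by default, no key needed
    if env.get("ENABLE_DUCKDUCKGO", "").lower() in {"1", "true", "yes"}:
        engines.append("duckduckgo")                 # free, but needs a VPN
    for name, key_var in [("bocha", "BOCHA_API_KEY"), ("tavily", "TAVILY_API_KEY")]:
        if env.get(key_var):
            engines.append(name)                     # only enabled when a key is set
    return Settings(
        api_key=env.get("LLM_API_KEY", ""),
        base_url=env.get("LLM_BASE_URL", "https://api.openai.com/v1"),
        model=env.get("LLM_MODEL", ""),
        engines=engines,
    )


def build_chat_request(settings: Settings, messages: list[dict[str, str]]) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = json.dumps({"model": settings.model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        settings.base_url.rstrip("/") + "/chat/completions",
        data=body,
        headers={"Authorization": f"Bearer {settings.api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )
```

Because only the base URL, key, and model name change, the same request shape works for any OpenAI-compatible endpoint, which is what "OpenAI-style API" support relies on.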

🚀 RUN

1. Test case

python test_demo.py

2. Streamlit demo

streamlit run streamlit_app.py

3. MCP server and LangGraph client

python mcp_server.py

python langgraph_mcp_client.py
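For readers new to MCP, the sketch below shows the shape of a `tools/call` exchange as JSON-RPC 2.0 messages, using only the standard library. It is not how `mcp_server.py` is implemented (a real server also handles `initialize` and `tools/list`, typically through an MCP SDK), and the `web_search` tool name and its `query`/`max_depth` arguments are hypothetical.

```python
import json
from collections.abc import Callable
from typing import Any

TOOLS: dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function as a callable tool under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def web_search(query: str, max_depth: int = 1) -> str:
    # Hypothetical stub standing in for the real search pipeline.
    return f"(stub) results for {query!r} at depth {max_depth}"


def make_call(req_id: int, name: str, arguments: dict[str, Any]) -> str:
    """Client side: a tools/call request encoded as a JSON-RPC 2.0 message."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "method": "tools/call",
                       "params": {"name": name, "arguments": arguments}})


def handle(message: str) -> str:
    """Server side: dispatch one tools/call request and wrap the reply."""
    req = json.loads(message)
    reply: dict[str, Any] = {"jsonrpc": "2.0", "id": req.get("id")}
    if req.get("method") != "tools/call":
        reply["error"] = {"code": -32601, "message": "Method not found"}
        return json.dumps(reply)
    params = req.get("params", {})
    fn = TOOLS.get(params.get("name", ""))
    if fn is None:
        reply["error"] = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
        return json.dumps(reply)
    try:
        text, is_error = fn(**params.get("arguments", {})), False
    except Exception as exc:      # exceptions raised by the tool go in the result
        text, is_error = f"Tool error: {exc}", True
    reply["result"] = {"content": [{"type": "text", "text": text}], "isError": is_error}
    return json.dumps(reply)
```

In this sketch an unknown tool is a protocol-level error (`-32602`), while an exception raised inside the tool comes back as a normal result with `isError` set, so the calling model can see what went wrong.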

Planning

🧪 RL-trained small recall QA model validation

🧪 Strategy improvements

🧪 Multi-agent framework implementation

🙌 Contributions are welcome! Share your ideas through [Issues] or [Pull Requests].

License

This repository is licensed under the Apache-2.0 License.
