Local AI with Ollama, Open WebUI & MCP on Windows

What is Local AI with Ollama, Open WebUI & MCP on Windows

Local-AI-with-Ollama-Open-WebUI-MCP-on-Windows is a self-hosted AI stack that combines Ollama for running language models, Open WebUI for user-friendly chat interaction, and MCP (Model Context Protocol) for exposing tools to the model, providing full control, privacy, and flexibility without relying on the cloud.

Use cases

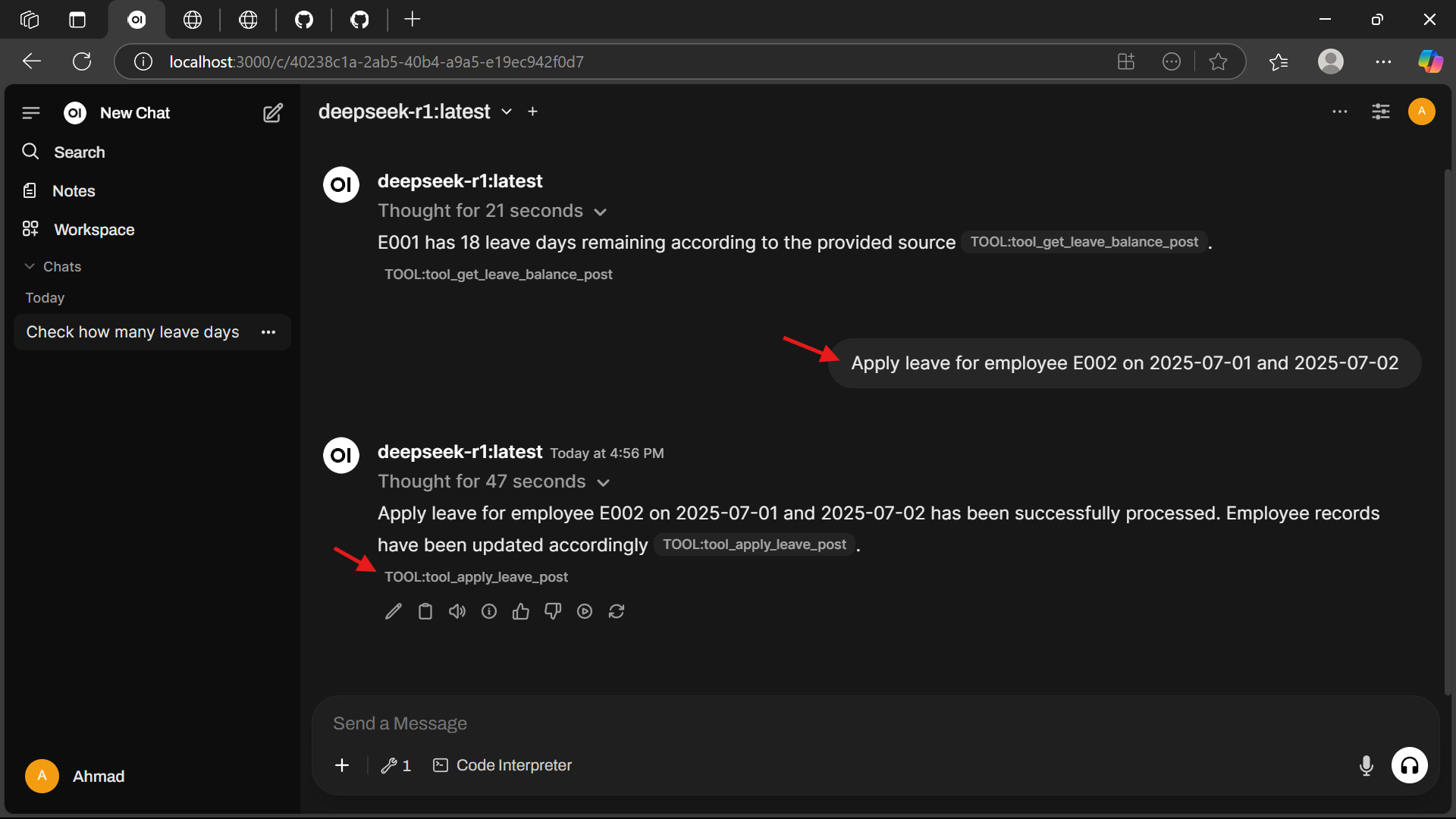

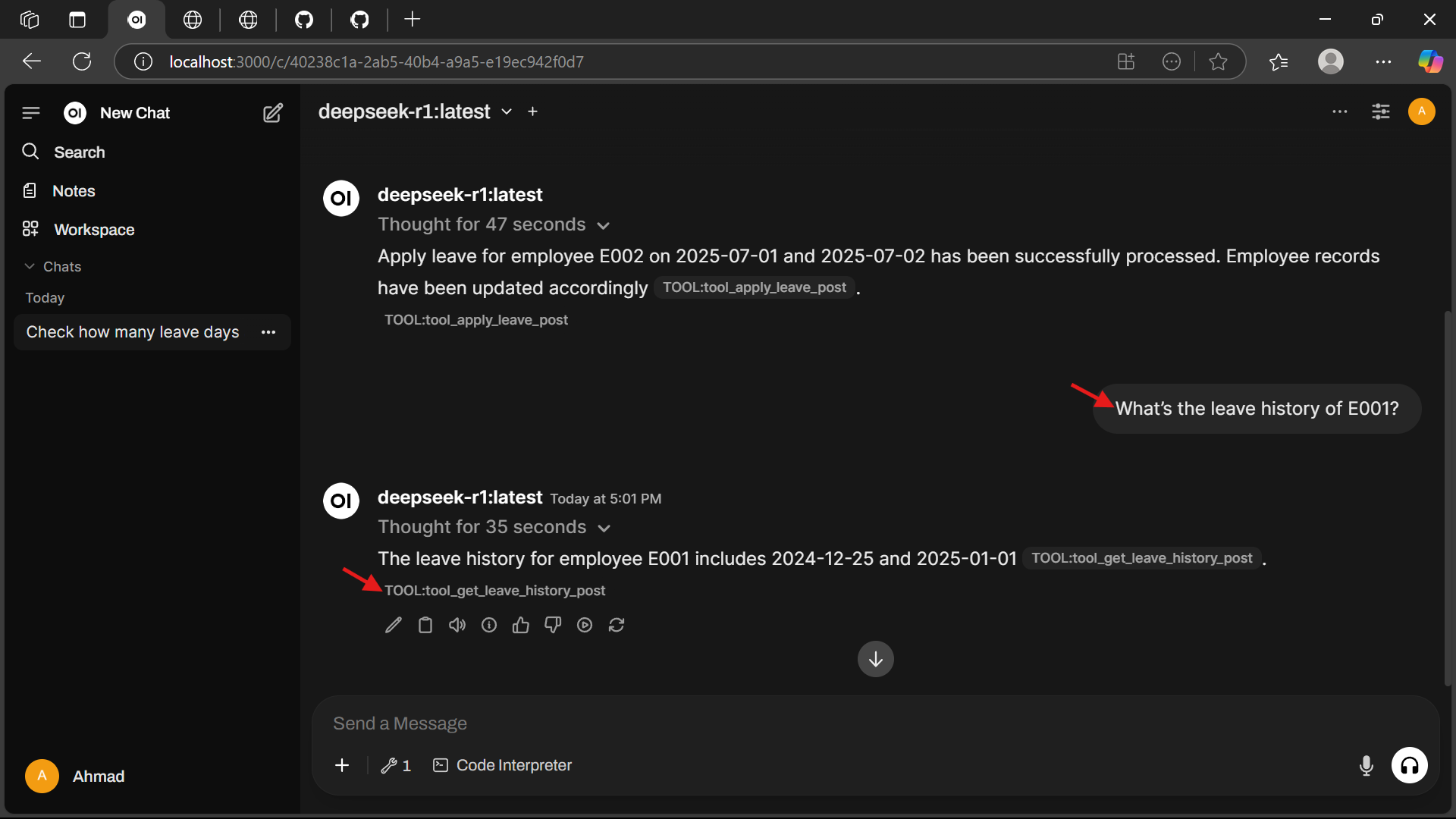

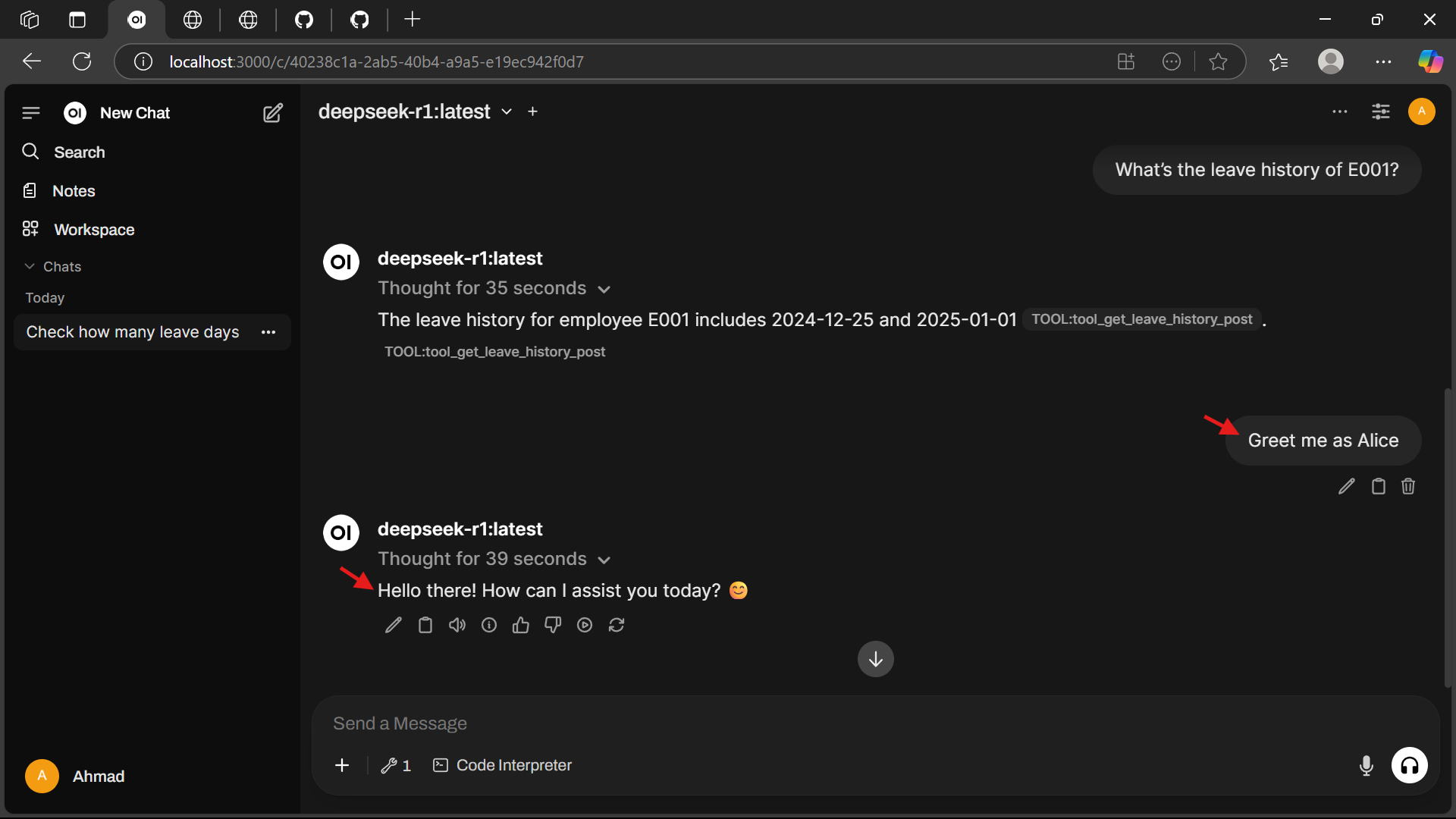

Use cases include managing employee leave requests, tracking leave history, and providing personalized greetings to employees.

How to use

To use Local-AI-with-Ollama-Open-WebUI-MCP-on-Windows, install Ollama on Windows, pull the ‘deepseek-r1’ model, clone the repository, navigate to the project directory, and run docker-compose up --build to launch the services defined in ‘docker-compose.yml’.

Key features

Key features include checking employee leave balance, applying for leave on specific dates, viewing leave history, and personalized greeting functionality.

Where to use

This tool can be used in various organizational settings, particularly in HR departments for managing employee leave applications and balances.

Local AI with Ollama, WebUI & MCP on Windows

A self-hosted AI stack combining Ollama for running language models, Open WebUI for user-friendly chat interaction, and MCP (Model Context Protocol) for exposing tools to the model. It offers full control, privacy, and flexibility without relying on the cloud.

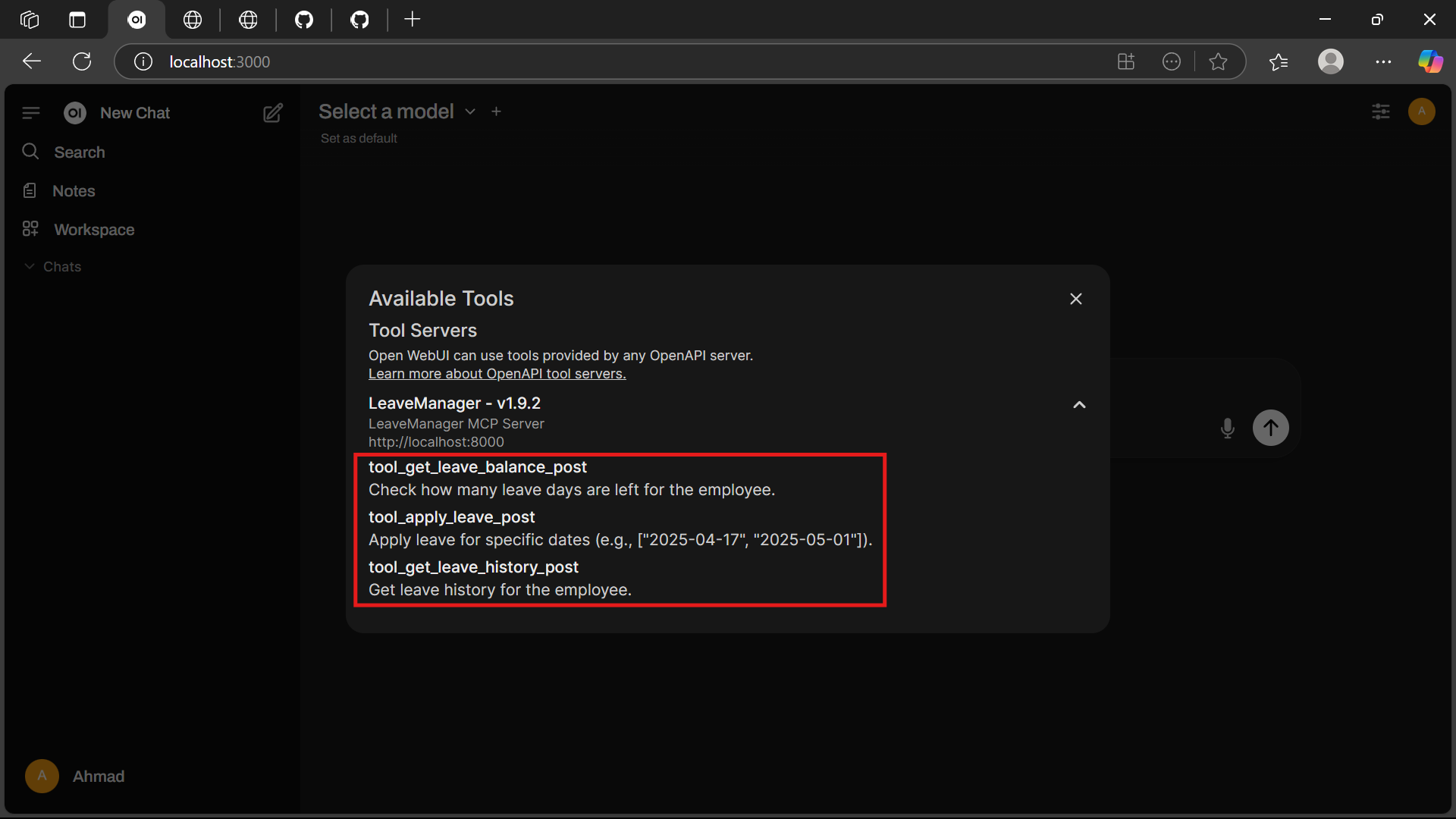

This sample project provides an MCP-based tool server for managing employee leave balance, applications, and history. It is exposed via OpenAPI using mcpo for easy integration with Open WebUI or other OpenAPI-compatible clients.

🚀 Features

- ✅ Check employee leave balance

- 📆 Apply for leave on specific dates

- 📜 View leave history

- 🙋 Personalized greeting functionality
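The four features above map naturally onto four small functions. The following is a minimal sketch of that logic in plain Python, not the project's actual main.py: the function names, the in-memory EMPLOYEES data, and the message wording are all assumptions made for illustration. In the real project, functions like these would be registered as tools with an MCP server library and served through mcpo.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Employee:
    balance: int                                       # remaining leave days
    history: List[str] = field(default_factory=list)   # dates already taken


# Hypothetical in-memory data; the real project may store this differently.
EMPLOYEES: Dict[str, Employee] = {
    "E001": Employee(balance=18, history=["2024-12-25", "2025-01-01"]),
    "E002": Employee(balance=20),
}


def get_leave_balance(employee_id: str) -> str:
    """Report how many leave days an employee has left."""
    emp = EMPLOYEES.get(employee_id)
    if emp is None:
        return "Employee ID not found."
    return f"{employee_id} has {emp.balance} leave days remaining."


def apply_leave(employee_id: str, leave_dates: List[str]) -> str:
    """Deduct one day per requested date if the balance allows it."""
    emp = EMPLOYEES.get(employee_id)
    if emp is None:
        return "Employee ID not found."
    requested = len(leave_dates)
    if requested > emp.balance:
        return (f"Insufficient leave balance: requested {requested}, "
                f"available {emp.balance}.")
    emp.balance -= requested
    emp.history.extend(leave_dates)
    return f"Leave applied for {requested} day(s). Remaining balance: {emp.balance}."


def get_leave_history(employee_id: str) -> str:
    """List the dates an employee has already taken."""
    emp = EMPLOYEES.get(employee_id)
    if emp is None:
        return "Employee ID not found."
    if not emp.history:
        return f"No leave taken by {employee_id}."
    return f"Leave history for {employee_id}: " + ", ".join(emp.history)


def greet(name: str) -> str:
    """Return a personalized greeting."""
    return f"Hello, {name}! How can I help with leave management today?"
```

Returning plain strings keeps the results easy for a chat model to relay to the user; a real server might prefer structured return values instead.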

📁 Project Structure

leave-manager/
├── main.py              # MCP server logic for leave management
├── requirements.txt     # Python dependencies for the MCP server
├── Dockerfile           # Docker image configuration for the leave manager
├── docker-compose.yml   # Docker Compose file to run leave manager and Open WebUI
└── README.md            # Project documentation (this file)

📋 Prerequisites

- Windows 10 or later (required for Ollama)

- Docker Desktop for Windows (required for Open WebUI and MCP)

- Install from: Docker Desktop for Windows

🛠️ Workflow

- Install Ollama on Windows

- Pull the deepseek-r1 model

- Clone the repository and navigate to the project directory

- Run the docker-compose.yml file to launch services

Install Ollama

➤ Windows

-

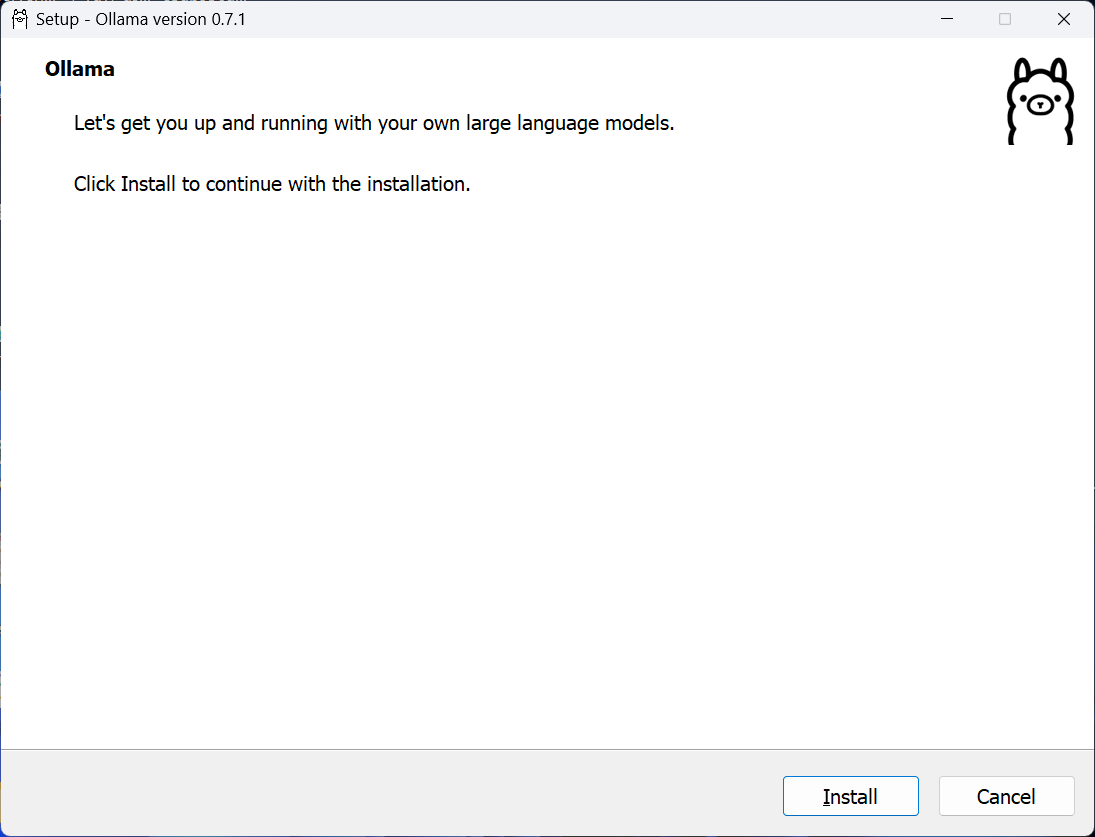

Download the Installer:

- Visit Ollama Download and click Download for Windows to get

OllamaSetup.exe. - Alternatively, download from Ollama GitHub Releases.

- Visit Ollama Download and click Download for Windows to get

-

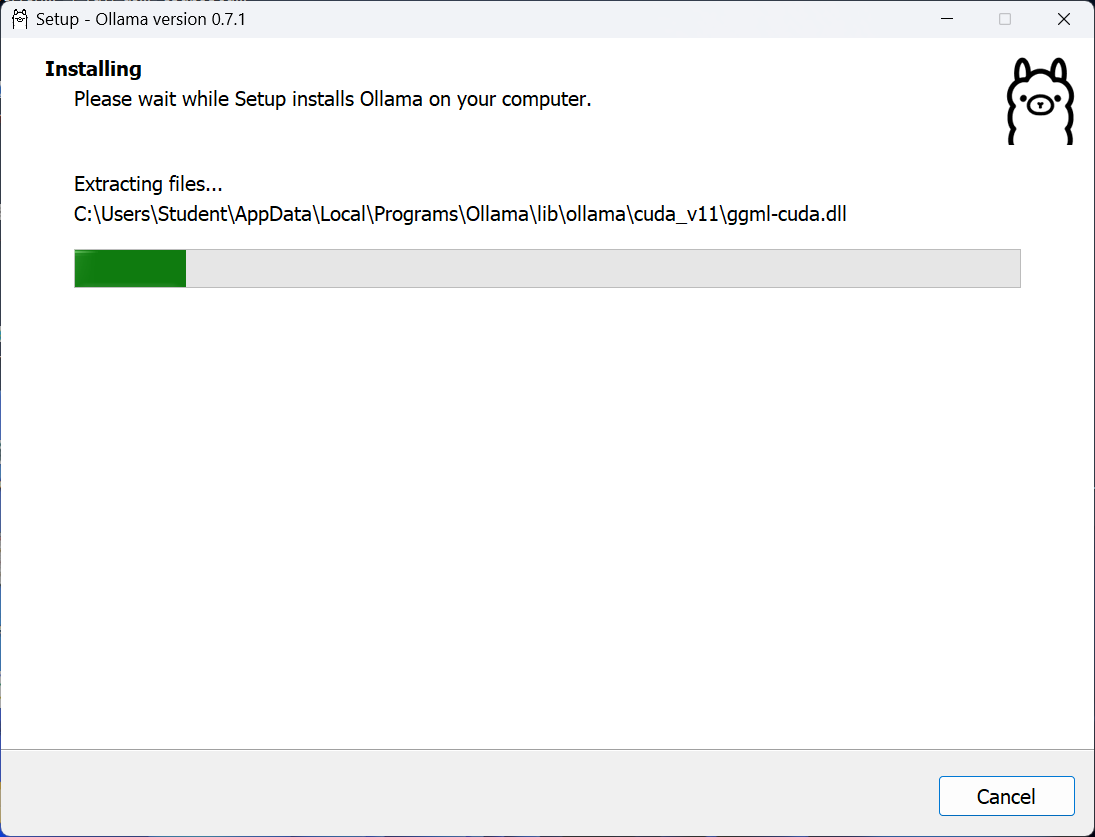

Run the Installer:

- Execute

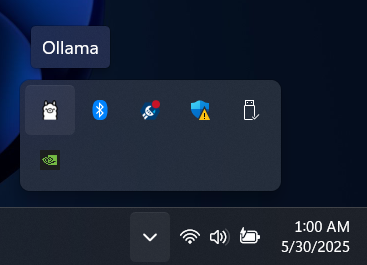

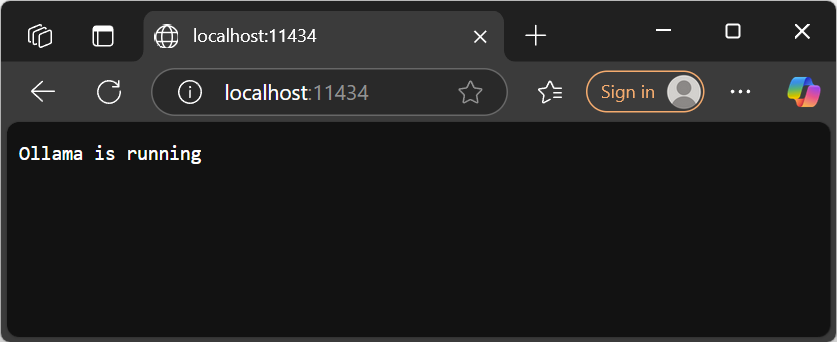

OllamaSetup.exeand follow the installation prompts. - After installation, Ollama runs as a background service, accessible at: http://localhost:11434.

- Verify in your browser; you should see:

Ollama is running

- Execute

-

Start Ollama Server (if not already running):

ollama serve- Access the server at: http://localhost:11434.
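The browser check above can also be scripted. This is a small sketch using only the Python standard library; the function name is_ollama_running and the injectable opener parameter are illustrative choices, and the check simply looks for the "Ollama is running" text that the root URL returns.

```python
import urllib.request
from typing import Callable


def is_ollama_running(base_url: str = "http://localhost:11434",
                      opener: Callable = urllib.request.urlopen,
                      timeout: float = 3.0) -> bool:
    """Return True if the Ollama root URL answers with its status text."""
    try:
        with opener(base_url, timeout=timeout) as resp:
            body = resp.read().decode("utf-8", errors="replace")
    except OSError:
        # Covers connection refused, timeouts, and urllib.error.URLError.
        return False
    return "Ollama is running" in body


# Usage: print("Ollama reachable:", is_ollama_running())
```

The opener parameter exists only so the function can be exercised without a live server; in normal use the default urlopen is enough.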

Verify Installation

Check the installed version of Ollama:

ollama --version

Expected Output (the version number will vary):

ollama version 0.7.1

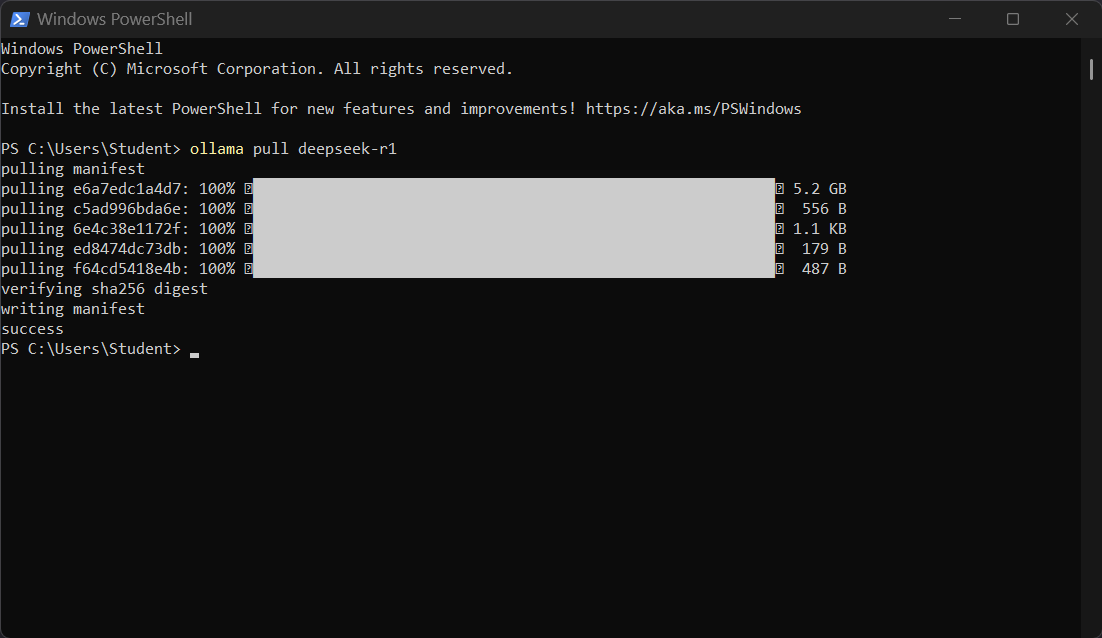

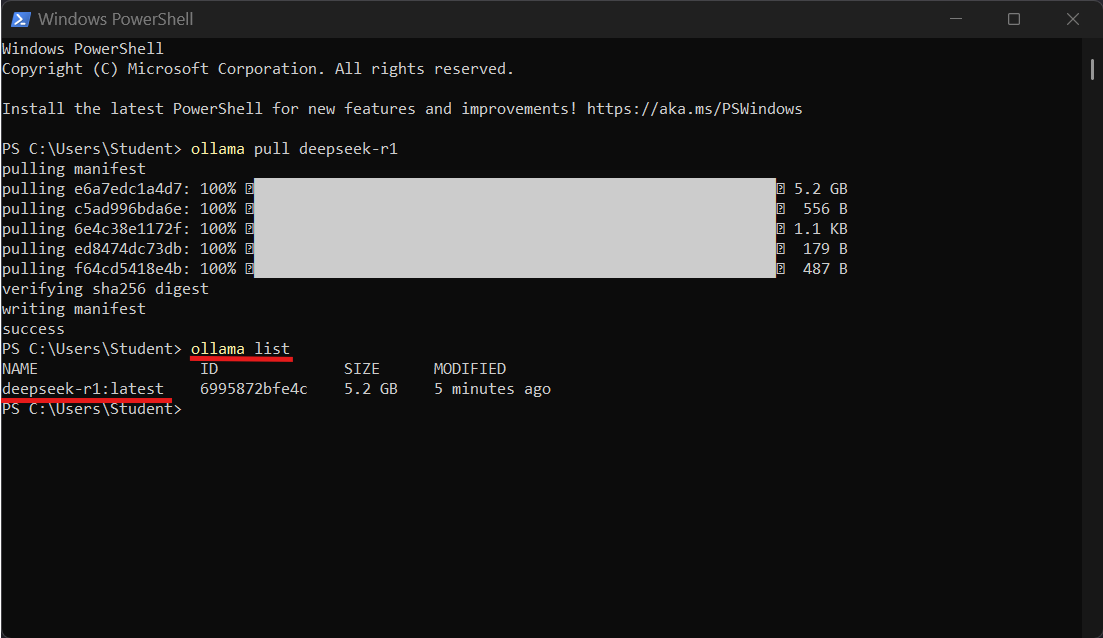

Pull the deepseek-r1 Model

1. Pull the Default Model (7B):

Using PowerShell:

ollama pull deepseek-r1

To Pull Specific Versions:

ollama pull deepseek-r1:1.5b

ollama pull deepseek-r1:671b

2. List Installed Models:

ollama list

Expected Output:

NAME                  ID              SIZE
deepseek-r1:latest    xxxxxxxxxxxx    X.X GB

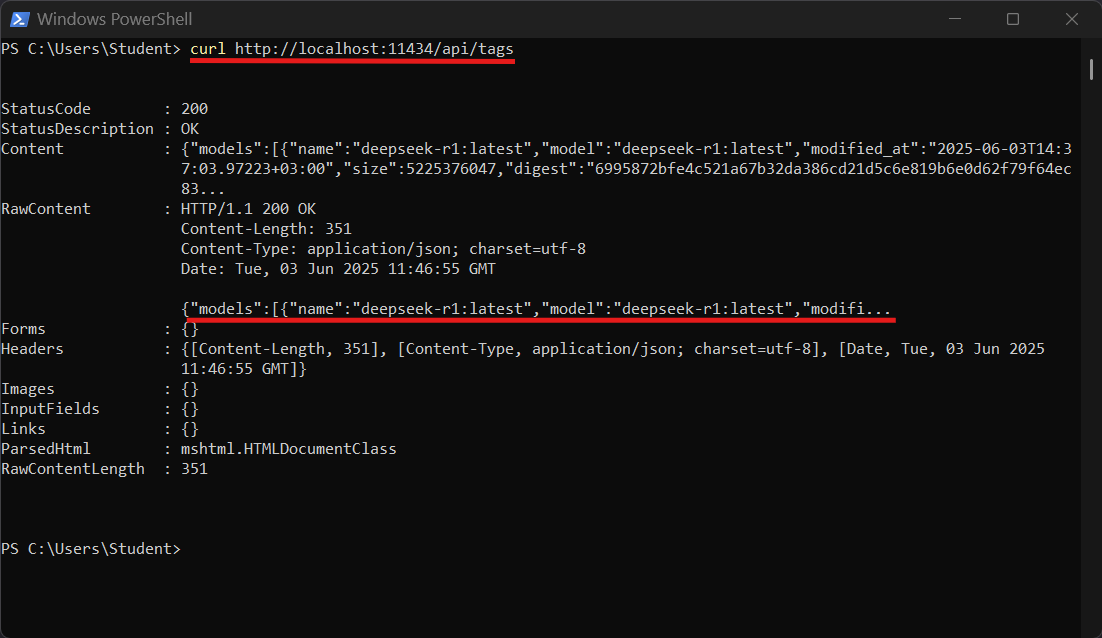

3. Alternative Check via API:

curl http://localhost:11434/api/tags

Expected Output:

A JSON response listing installed models, including deepseek-r1:latest.
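To check that response programmatically, a sketch along these lines may help. It assumes the /api/tags response has a top-level "models" array whose entries carry a "name" field, and that an untagged model name refers to the ":latest" tag; the helper names are made up for this example.

```python
import json
from typing import List


def installed_models(tags_response: str) -> List[str]:
    """Extract model names from the JSON text returned by /api/tags."""
    data = json.loads(tags_response)
    return [m["name"] for m in data.get("models", [])]


def has_model(names: List[str], wanted: str) -> bool:
    """Check for a model; a name without a tag is treated as ':latest'."""
    if ":" not in wanted:
        wanted += ":latest"
    return wanted in names


# Usage with a live server (requires Ollama to be running):
#   import urllib.request
#   with urllib.request.urlopen("http://localhost:11434/api/tags") as resp:
#       names = installed_models(resp.read().decode("utf-8"))
#   print(has_model(names, "deepseek-r1"))
```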

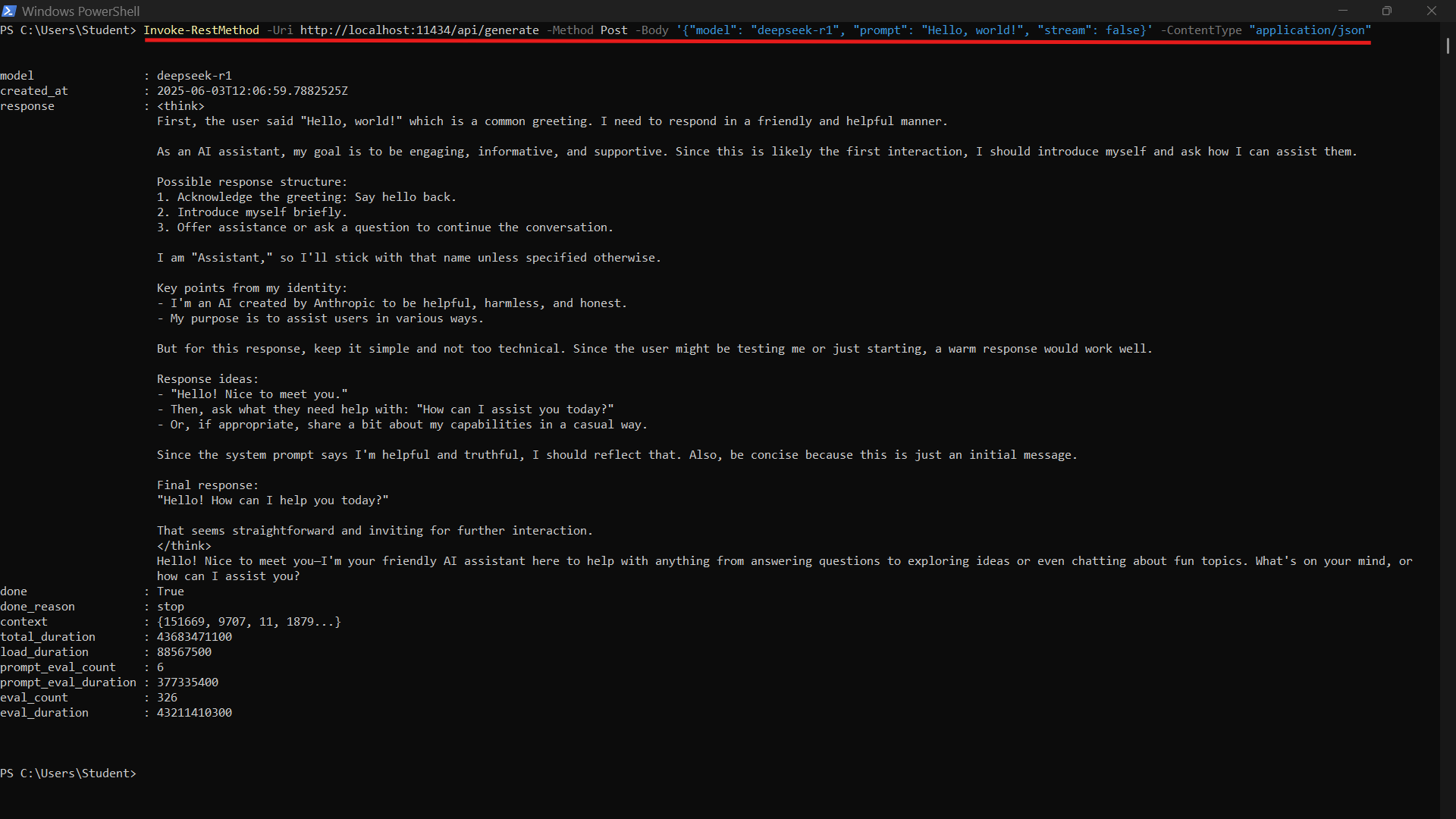

4. Test the API via PowerShell:

Invoke-RestMethod -Uri http://localhost:11434/api/generate -Method Post -Body '{"model": "deepseek-r1", "prompt": "Hello, world!", "stream": false}' -ContentType "application/json"

Expected Response:

A JSON object containing the model’s response to the “Hello, world!” prompt.
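The same request can be sent from Python, which is the language of the leave manager itself. The sketch below mirrors the PowerShell call using only the standard library; the function names are illustrative, and it assumes the non-streaming reply carries the generated text in a "response" field.

```python
import json
import urllib.request


def build_generate_request(prompt: str,
                           model: str = "deepseek-r1",
                           base_url: str = "http://localhost:11434") -> urllib.request.Request:
    """Build the POST request for /api/generate without sending it."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url=f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, **kwargs) -> str:
    """Send the prompt and return the model's text (needs a running server)."""
    req = build_generate_request(prompt, **kwargs)
    # Local models can be slow on first load, so allow a generous timeout.
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.loads(resp.read())["response"]


# Usage: print(generate("Hello, world!"))
```

Separating request construction from sending makes the payload easy to inspect before anything touches the network.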

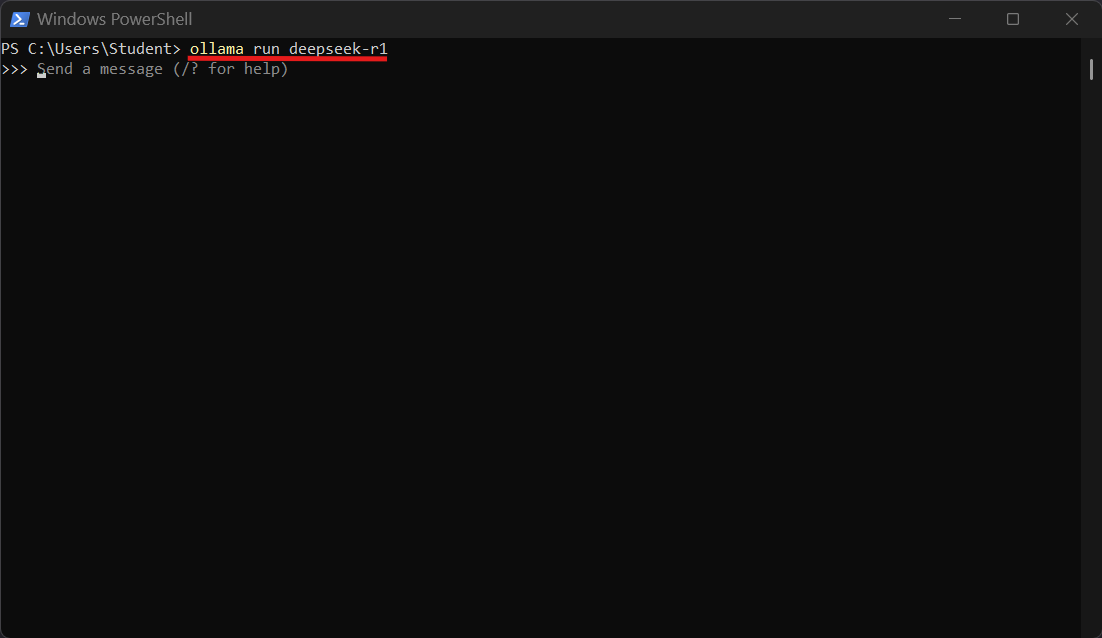

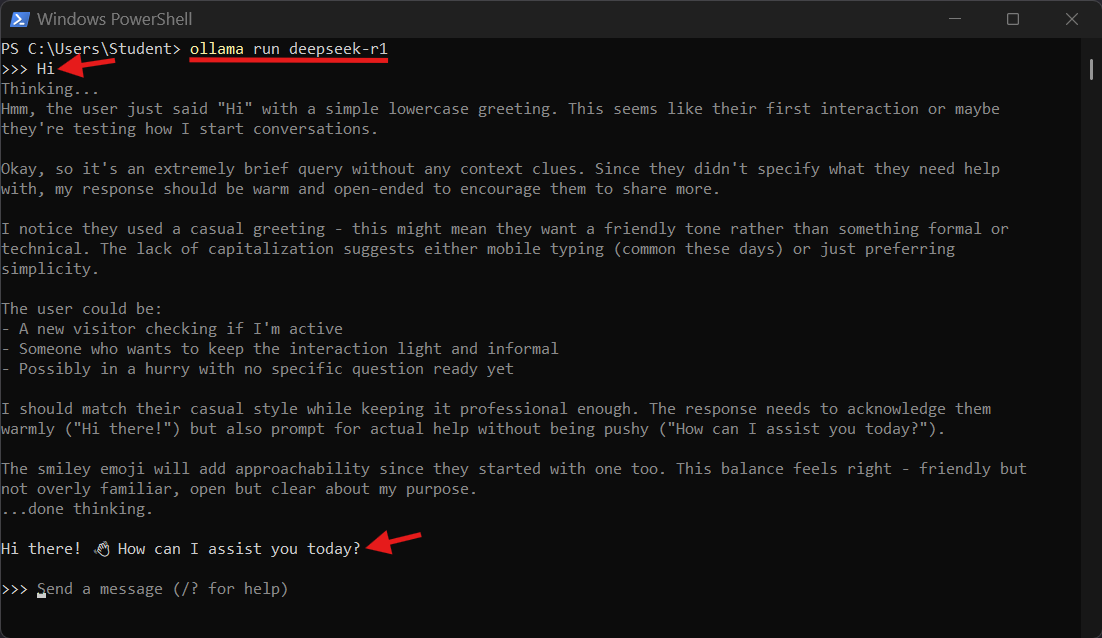

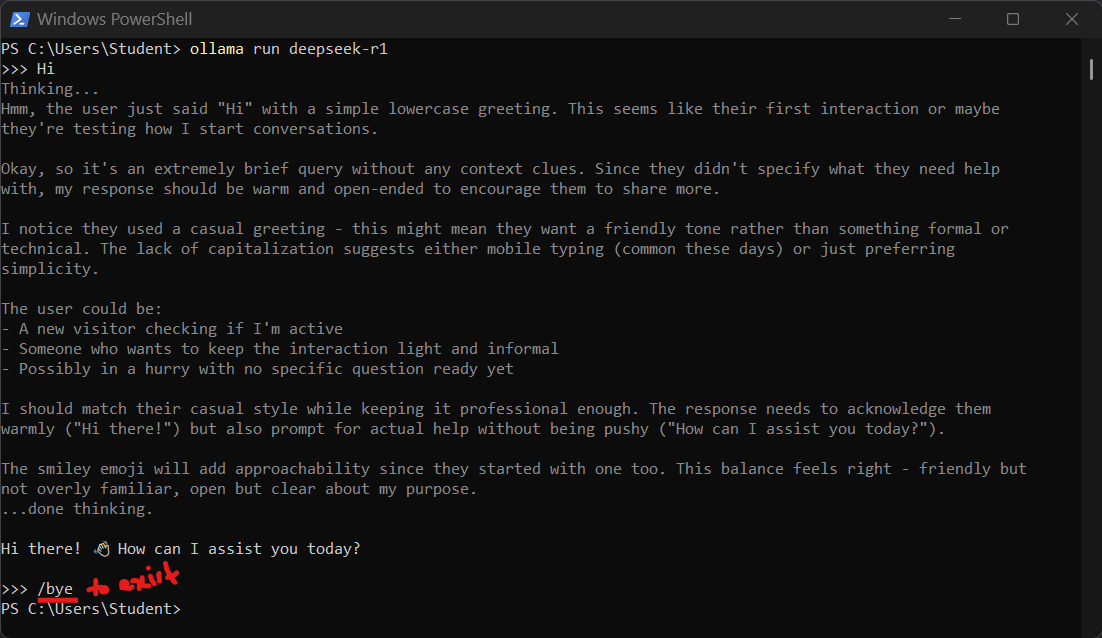

5. Run and Chat with the Model via PowerShell:

ollama run deepseek-r1

- This opens an interactive chat session with the deepseek-r1 model.

- Type /bye and press Enter to exit the chat session.

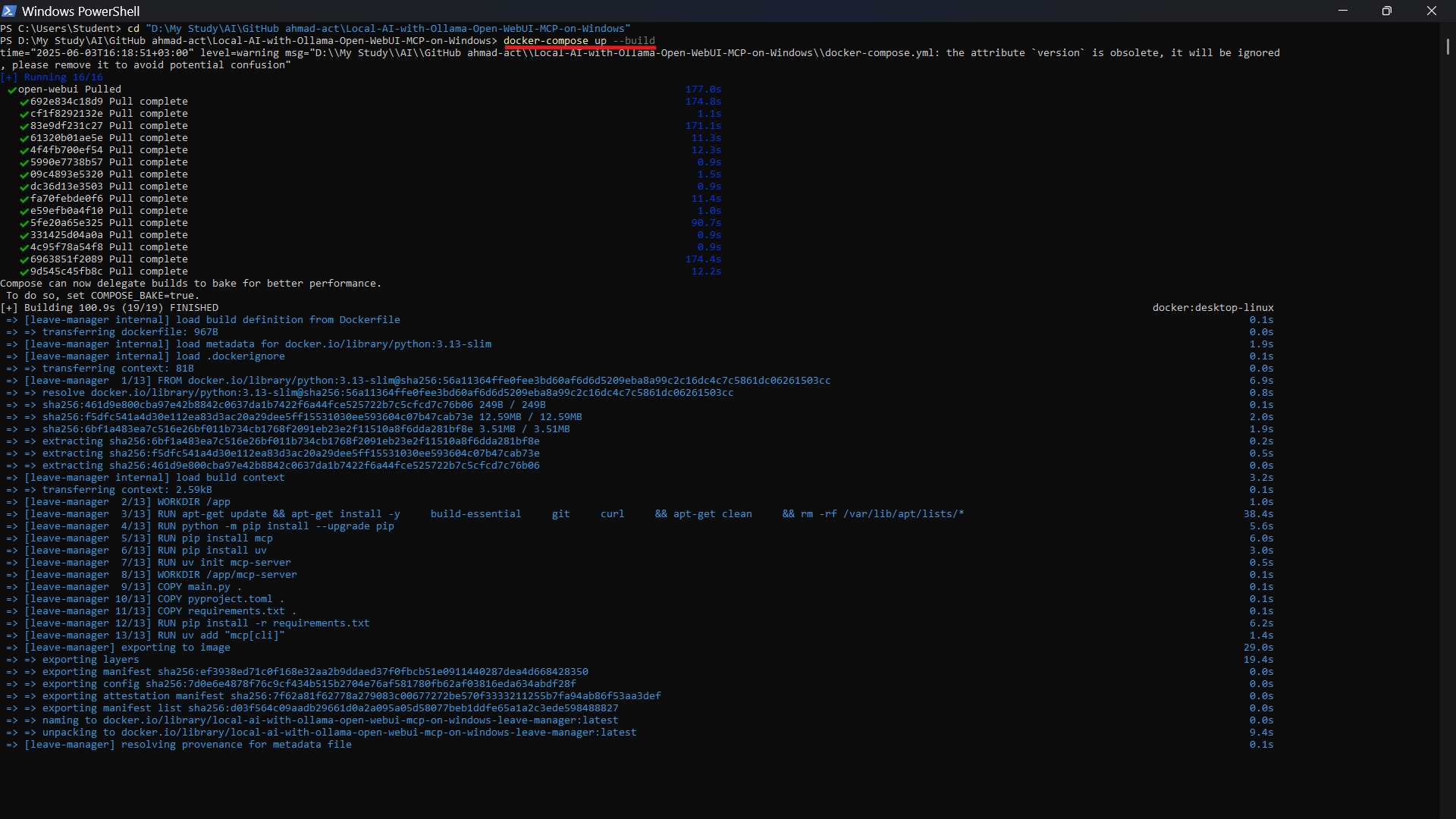

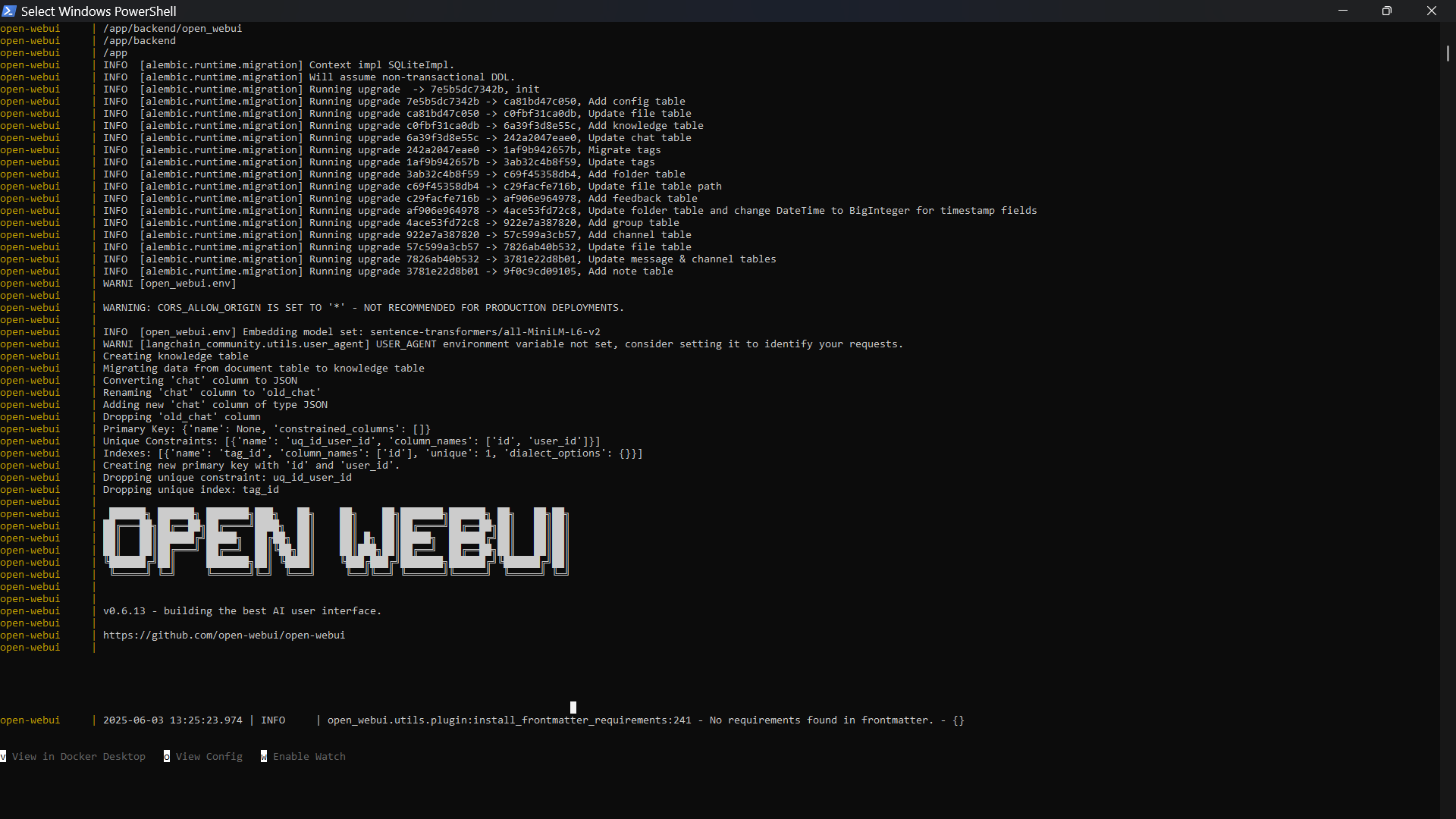

🐳 Run Open WebUI and MCP Server with Docker Compose

-

Clone the Repository:

git clone https://github.com/ahmad-act/Local-AI-with-Ollama-Open-WebUI-MCP-on-Windows.git cd Local-AI-with-Ollama-Open-WebUI-MCP-on-Windows -

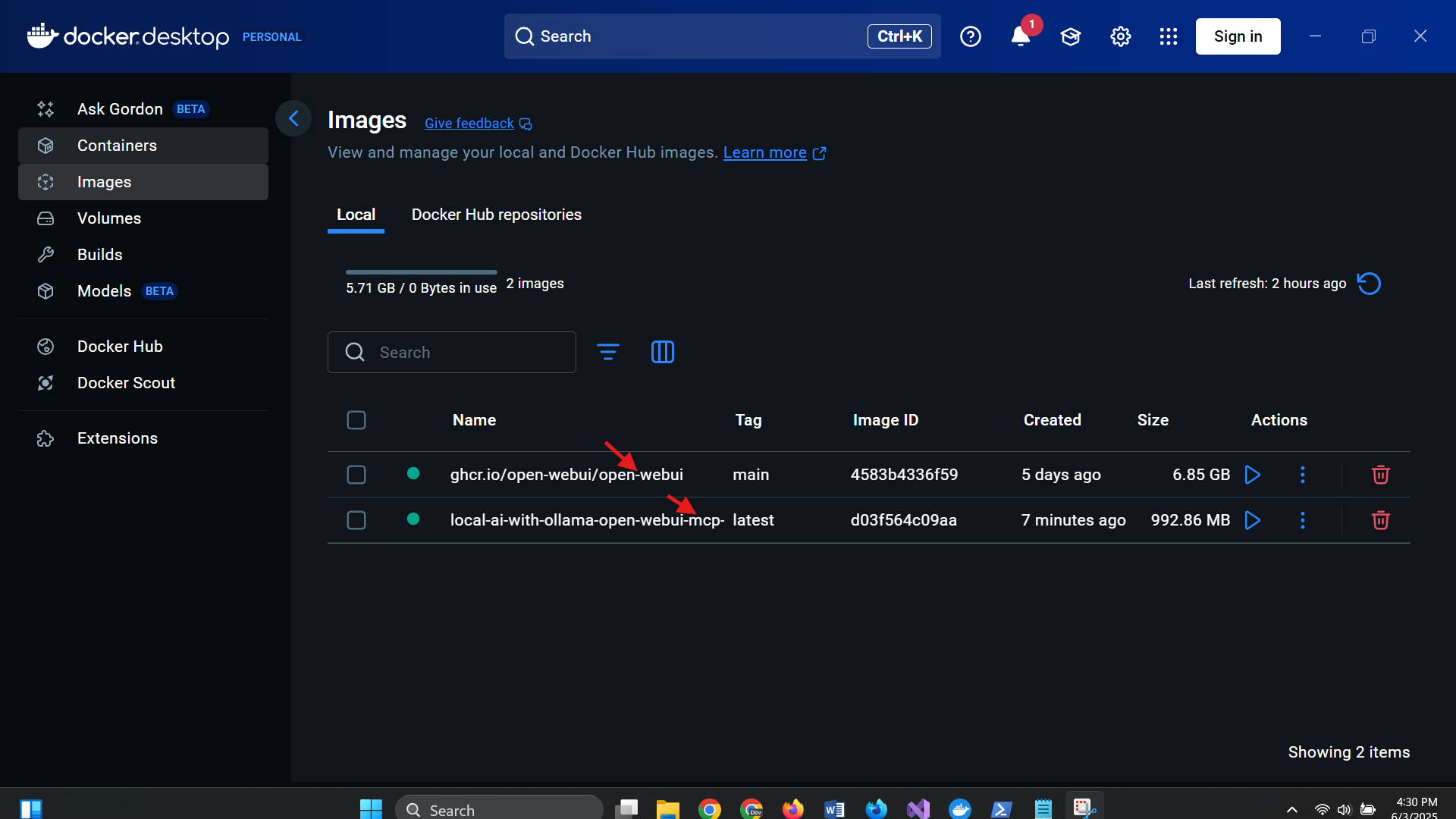

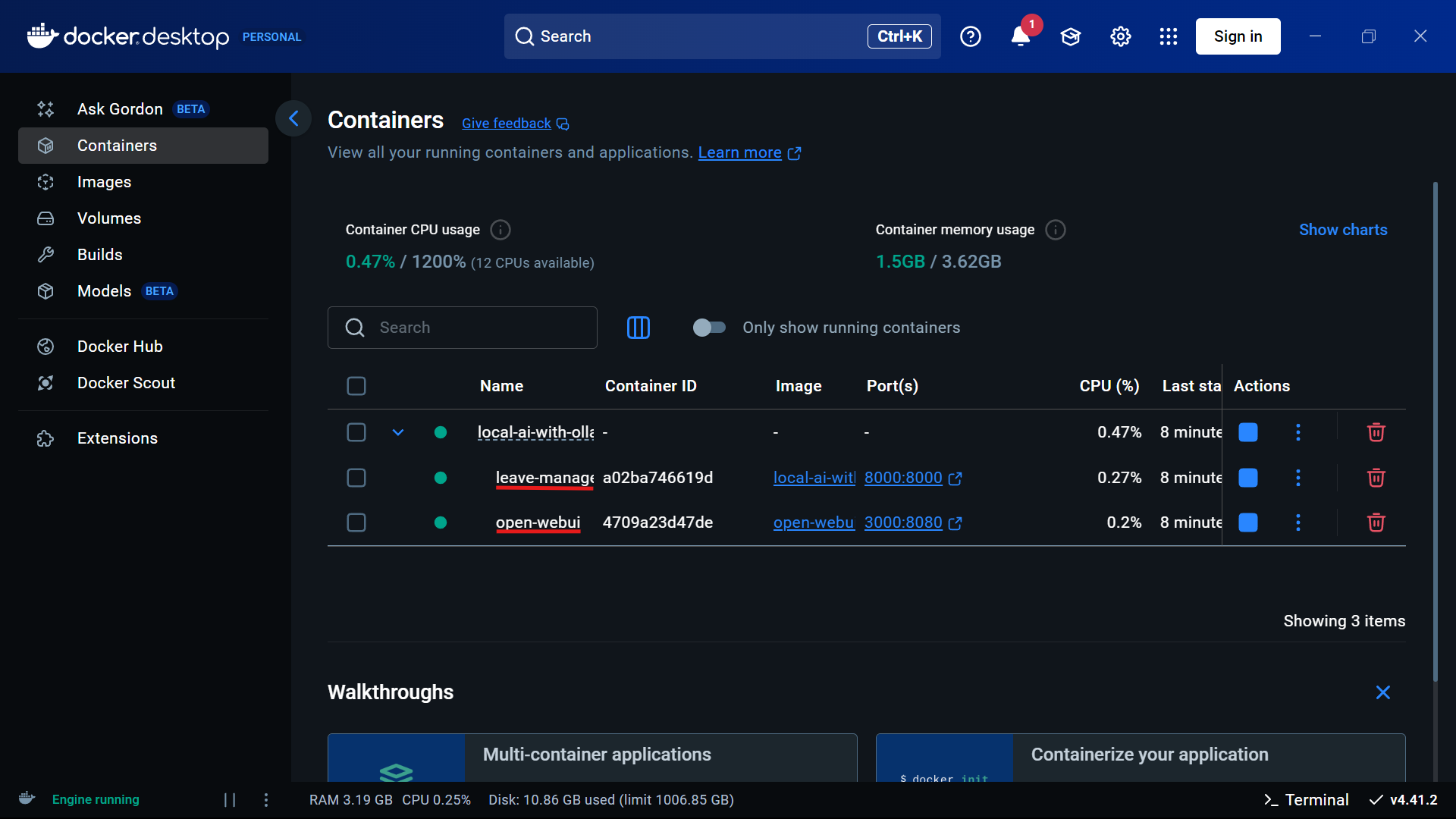

To launch both the MCP tool and Open WebUI locally (on Docker Desktop):

docker-compose up --build

This will:

- Start the Leave Manager (MCP Server) tool on port

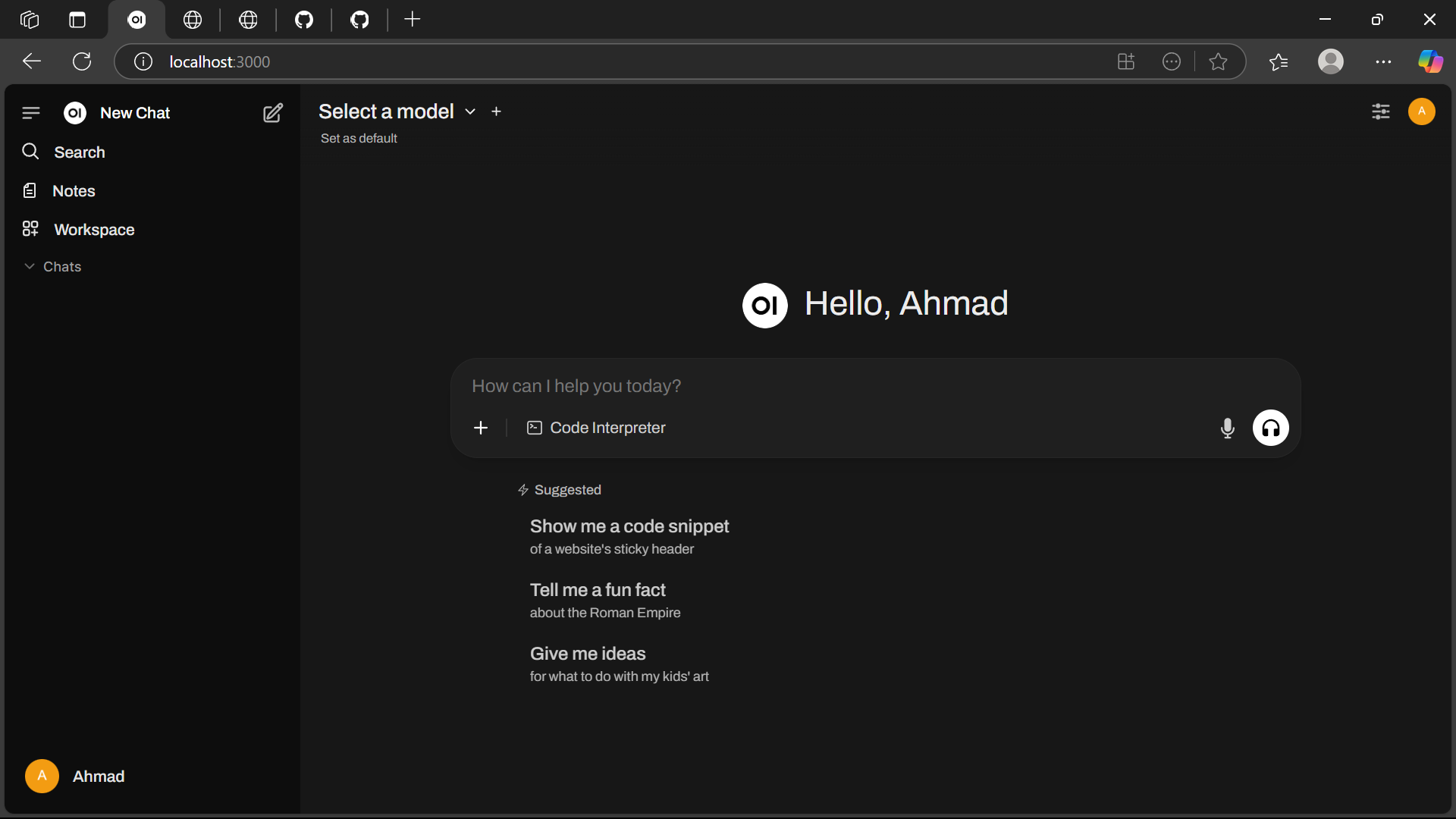

8000 - Launch Open WebUI at http://localhost:3000

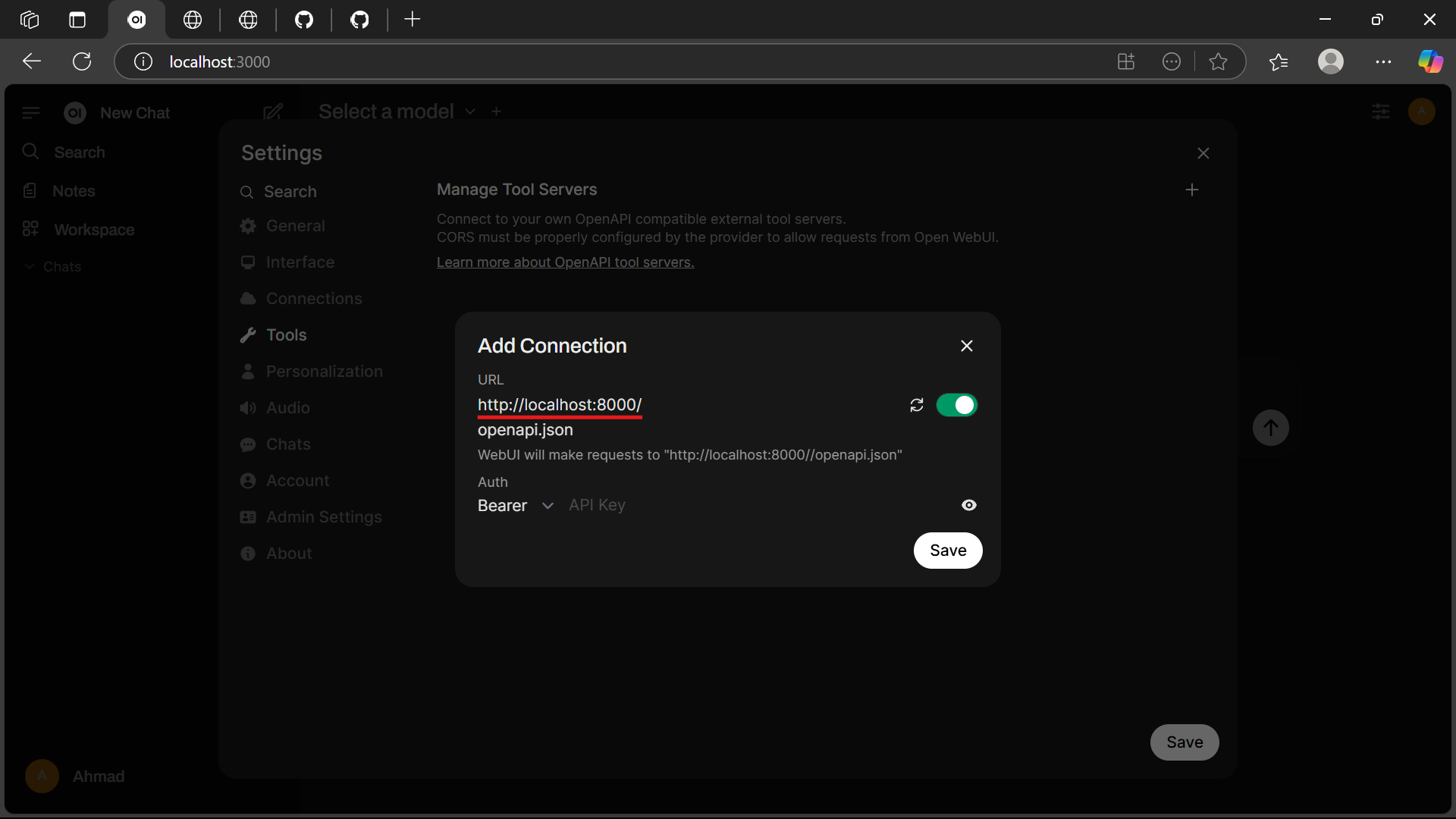

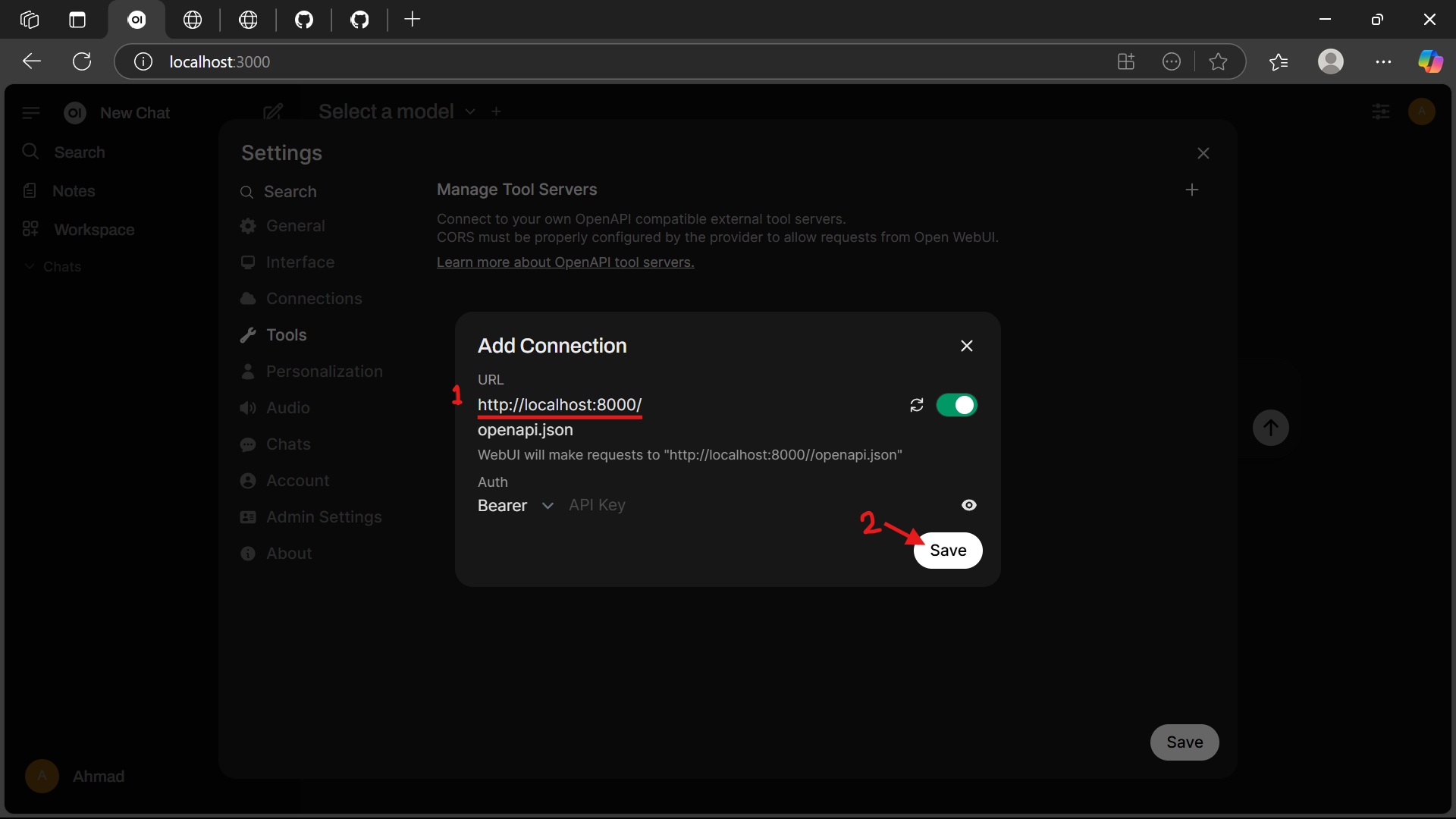

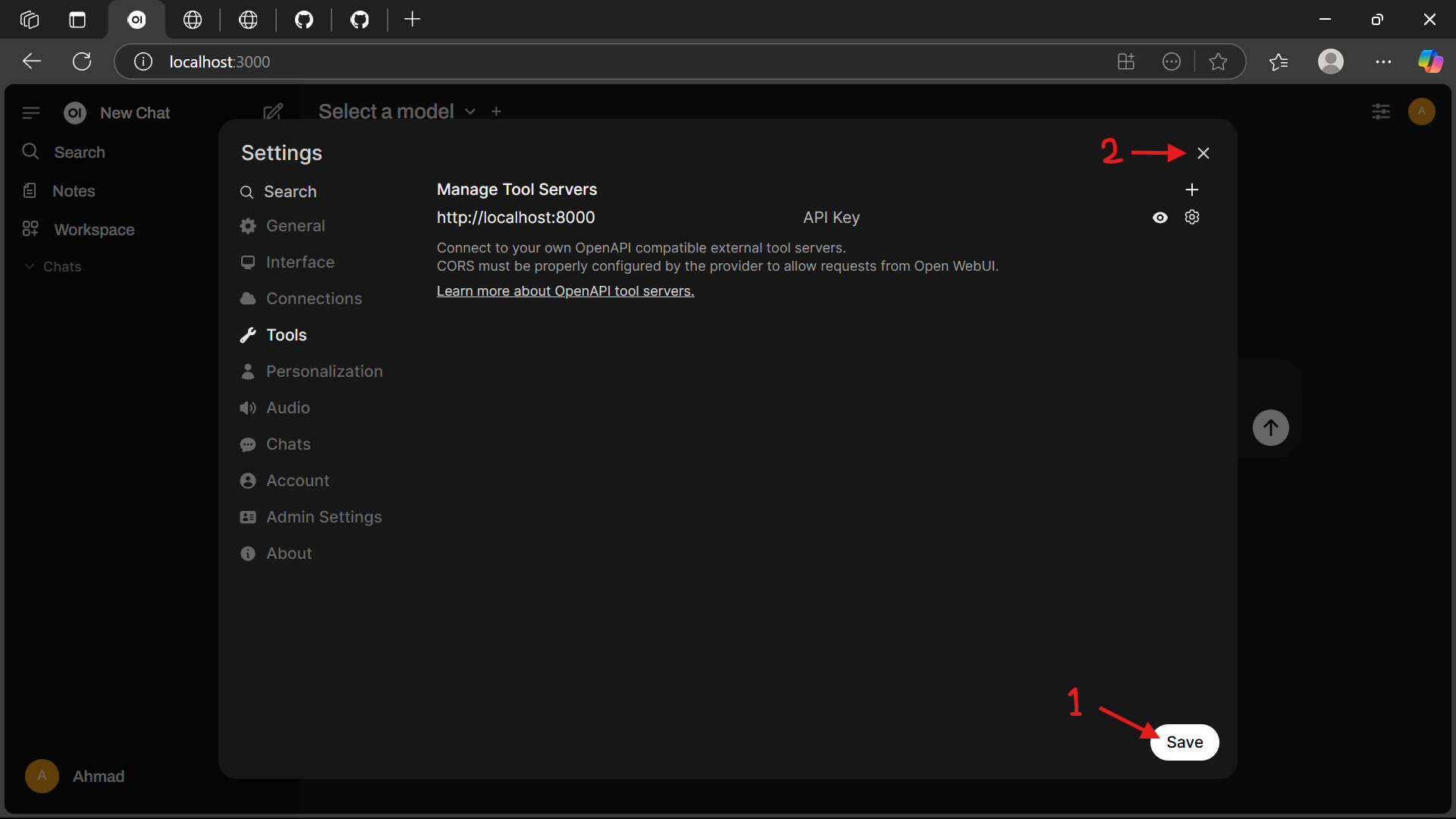

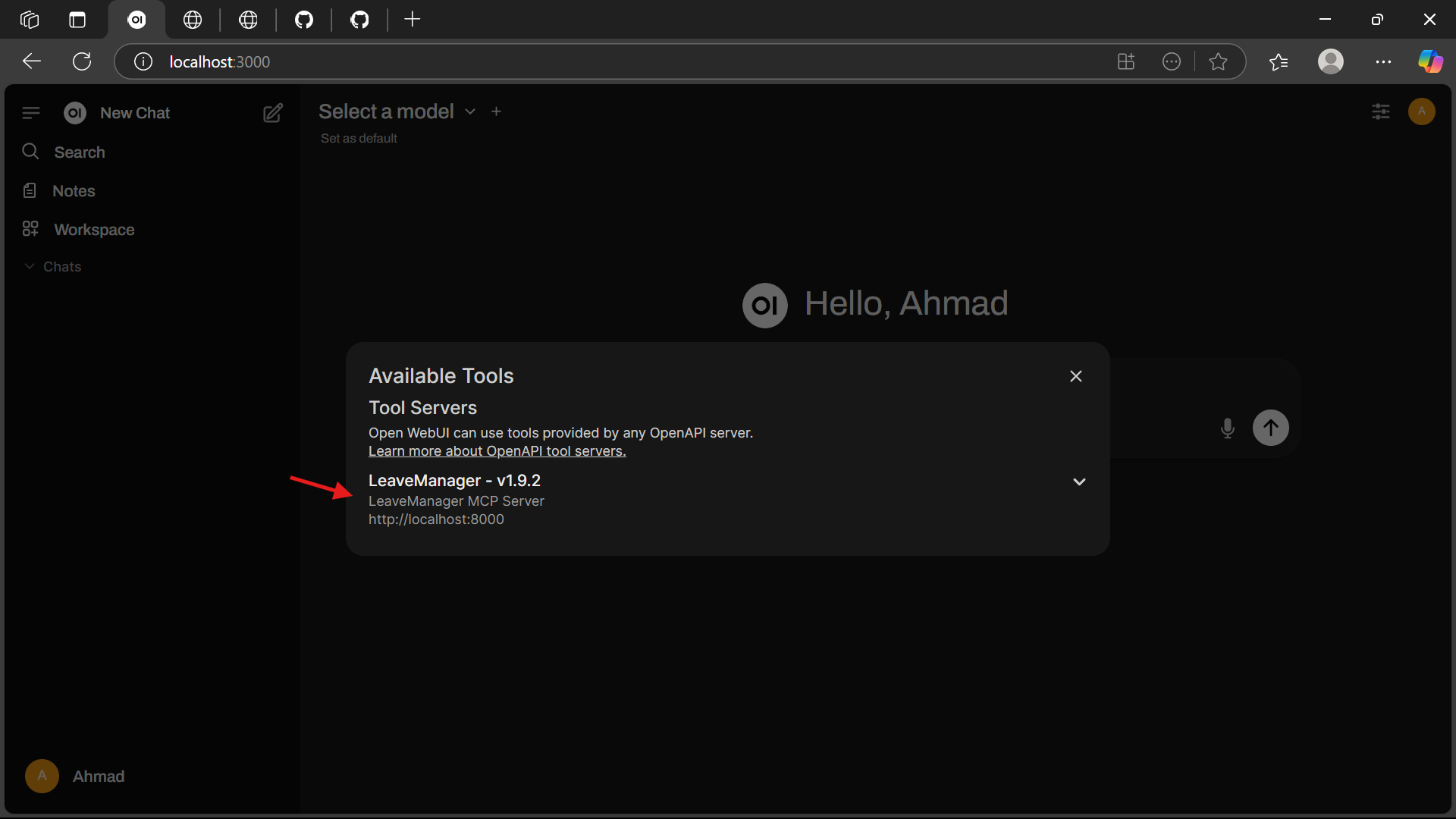

🌐 Add MCP Tools to Open WebUI

The MCP tools are exposed via the OpenAPI specification at: http://localhost:8000/openapi.json.
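Before adding the tool in Open WebUI, it can be useful to see which operations that specification actually advertises. The sketch below walks the standard OpenAPI "paths" object and lists each operation; the paths in the usage comment are hypothetical and the real server's routes may differ.

```python
from typing import Dict, List, Tuple

# HTTP verbs that can appear as operation keys under an OpenAPI path item.
HTTP_METHODS = {"get", "post", "put", "patch", "delete"}


def list_operations(spec: Dict) -> List[Tuple[str, str, str]]:
    """Return (METHOD, path, summary) for every operation, sorted by path."""
    ops = []
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            # Skip non-operation keys such as "parameters" or "summary".
            if method.lower() in HTTP_METHODS:
                ops.append((method.upper(), path, op.get("summary", "")))
    return sorted(ops, key=lambda o: o[1])


# Usage with the running tool server:
#   import json, urllib.request
#   with urllib.request.urlopen("http://localhost:8000/openapi.json") as resp:
#       for method, path, summary in list_operations(json.load(resp)):
#           print(method, path, summary)
```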

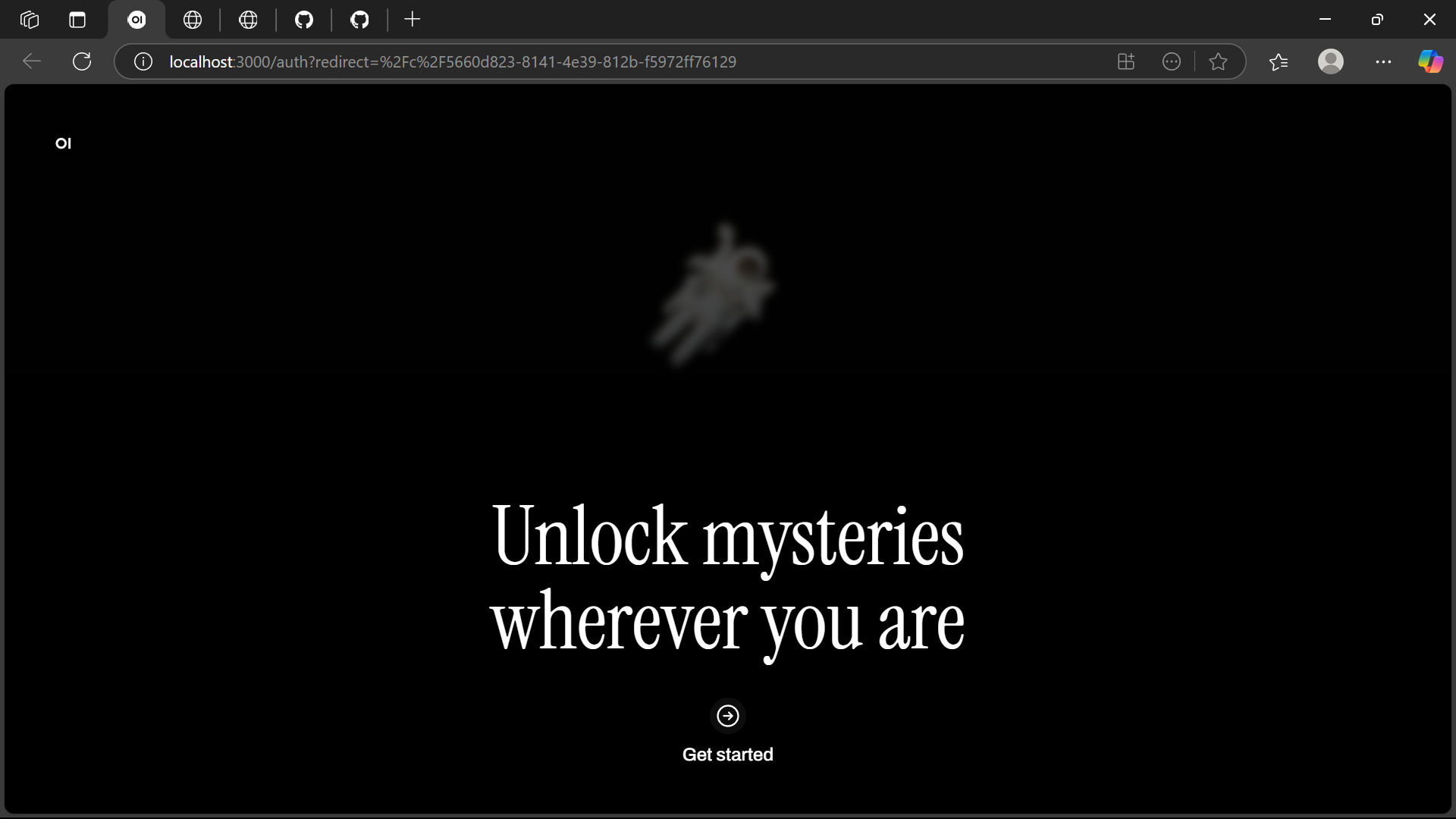

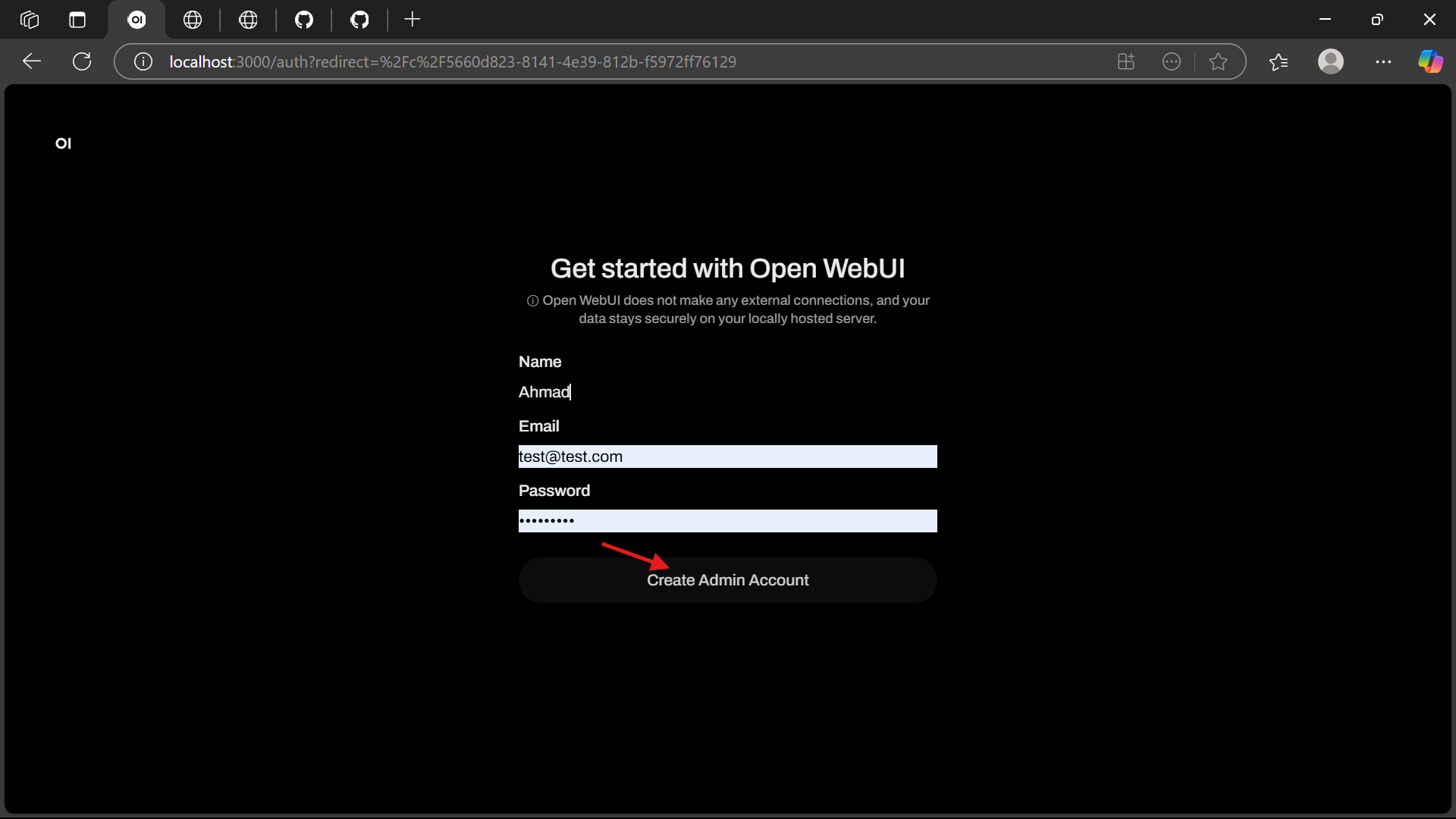

- Open http://localhost:3000 in your browser.

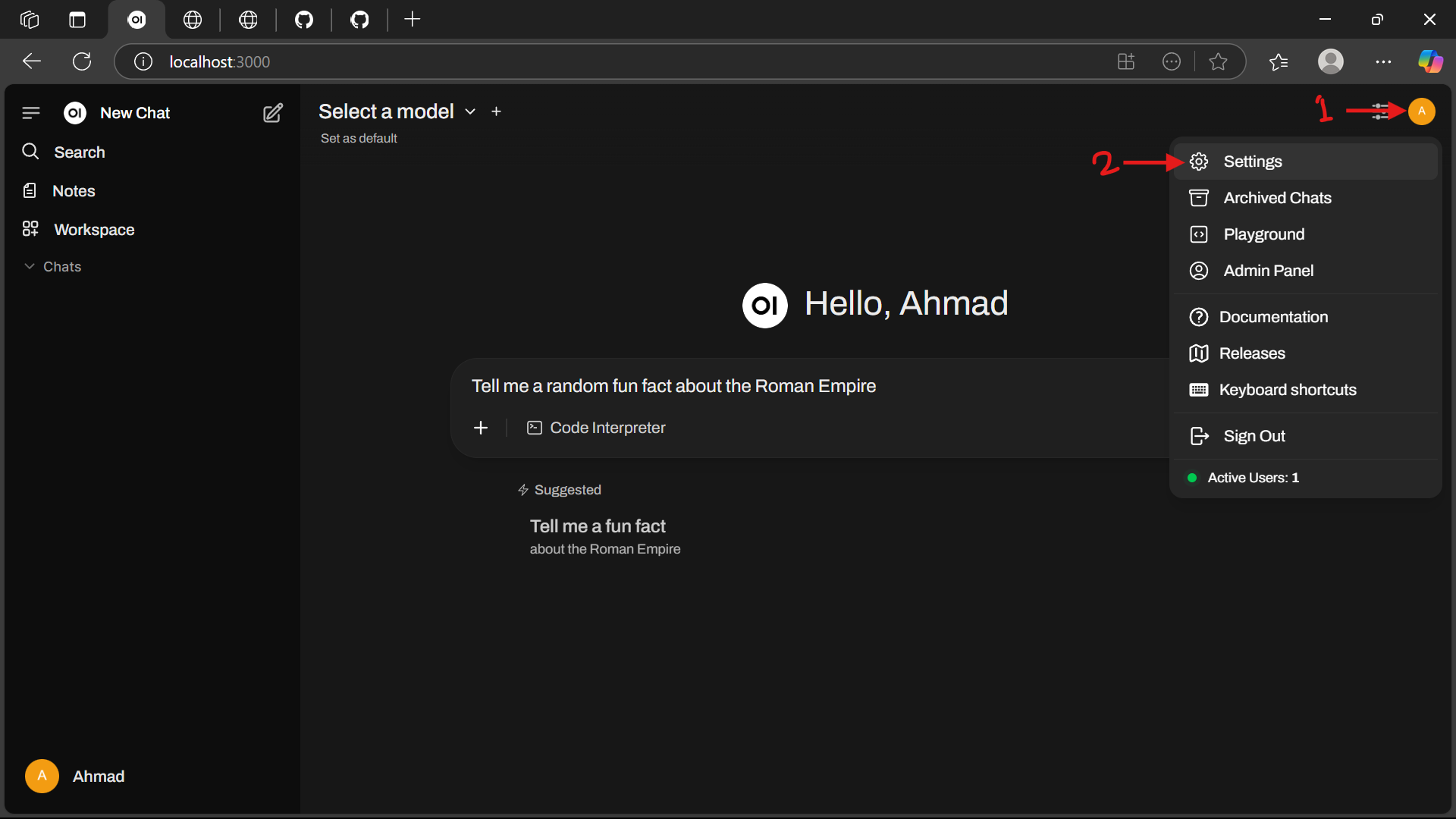

- Click the Profile Icon and navigate to Settings.

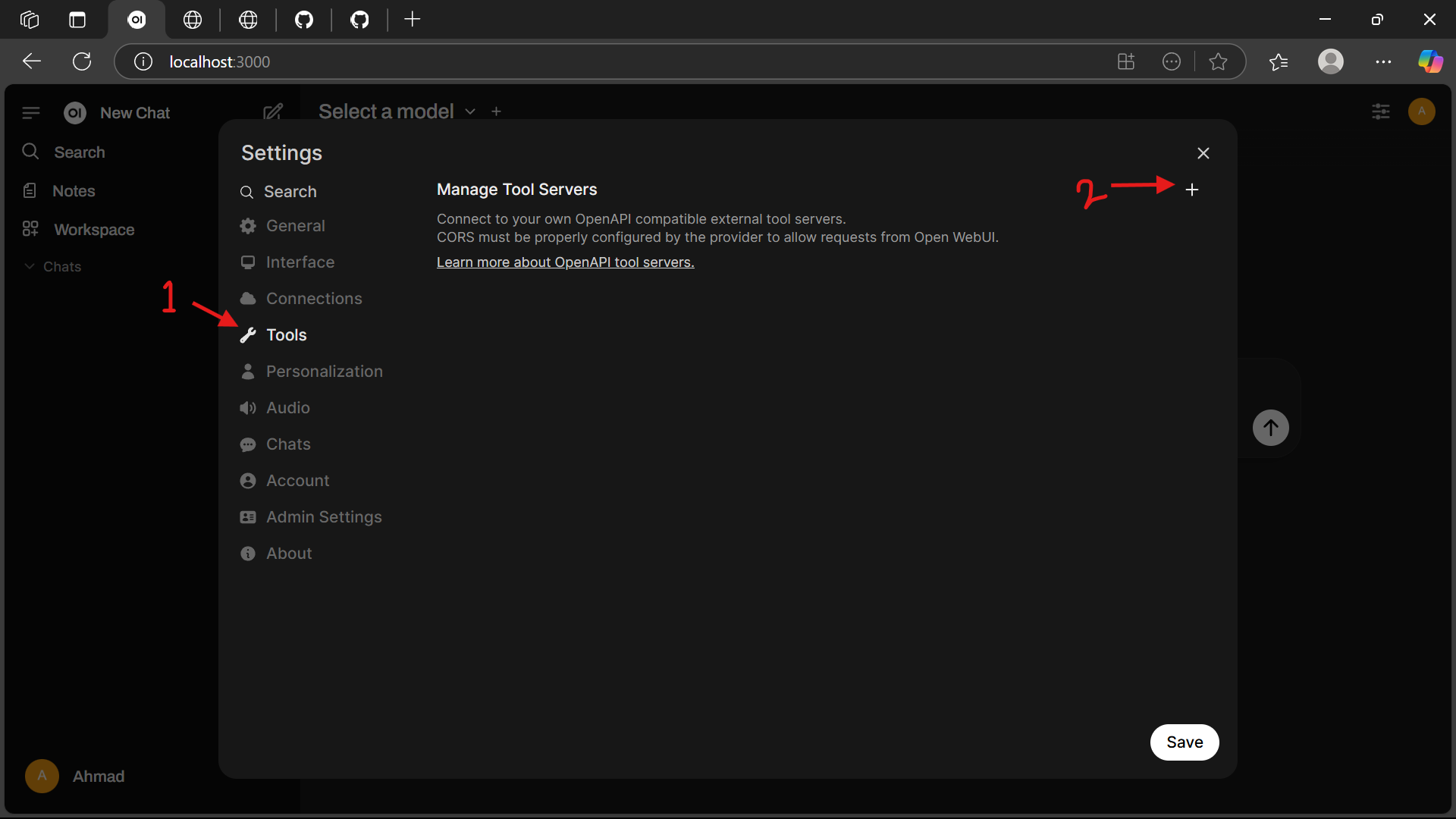

- Select the Tools menu and click the Add (+) Button.

- Add a new tool by entering the URL: http://localhost:8000/.

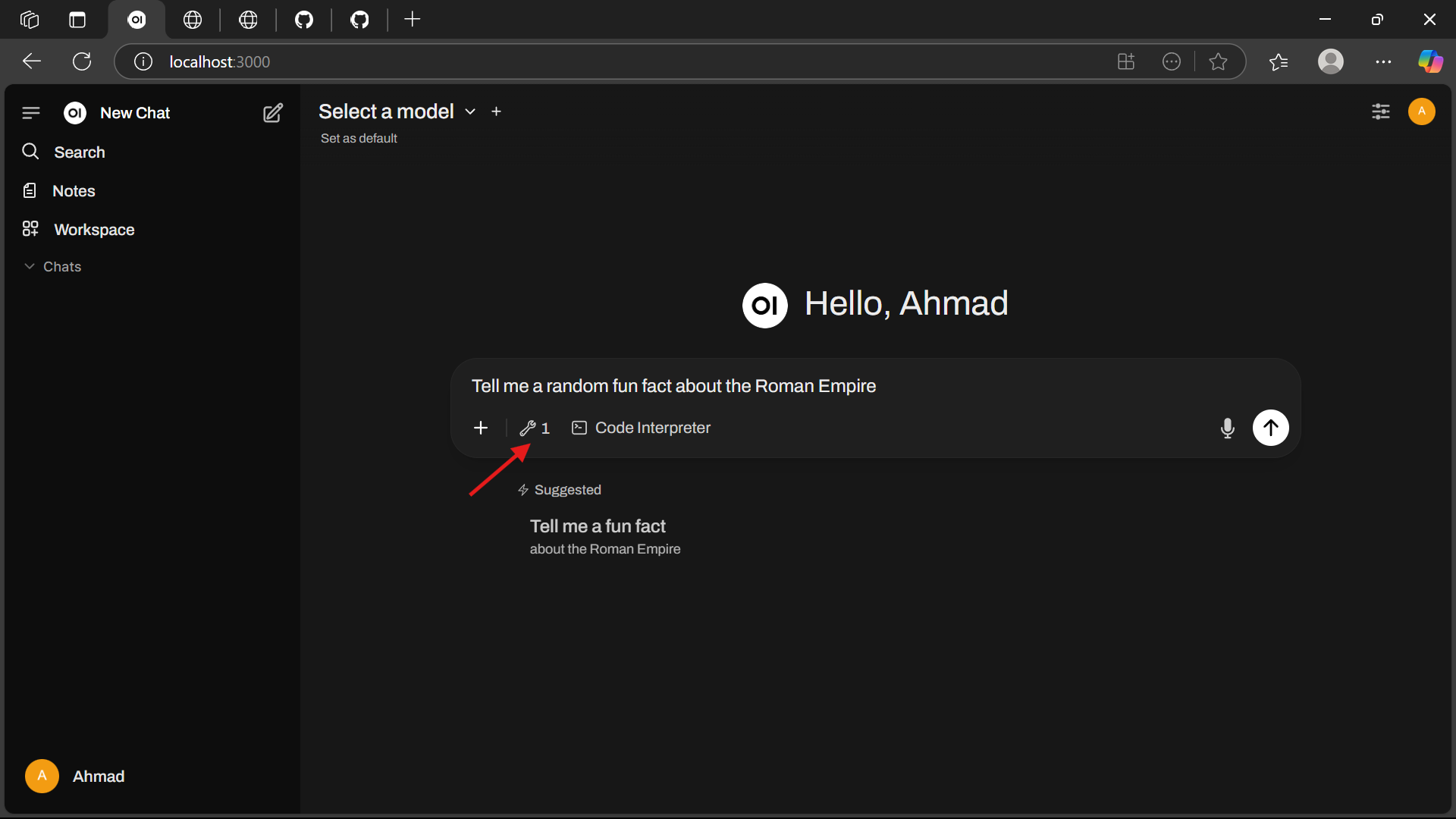

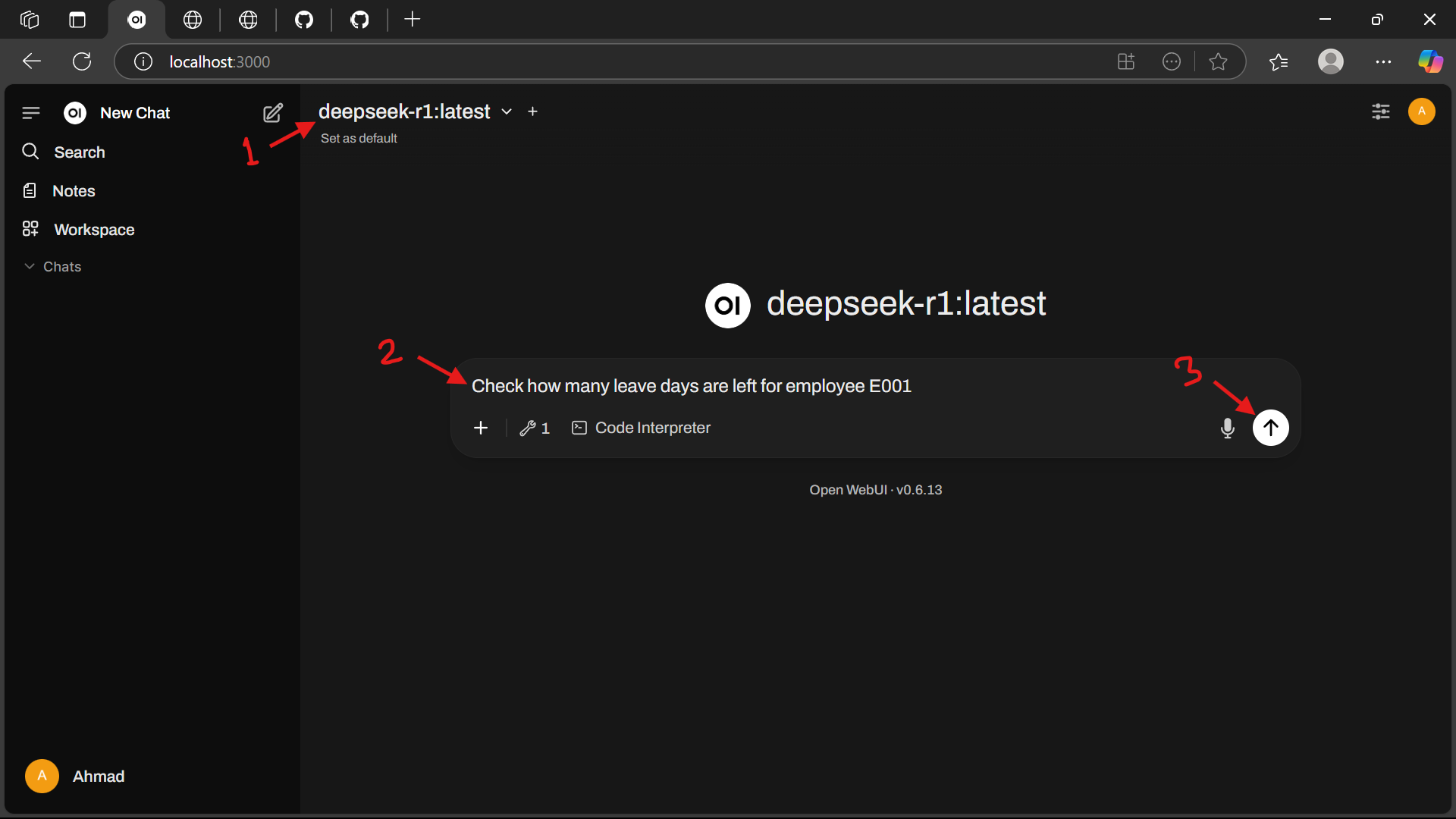

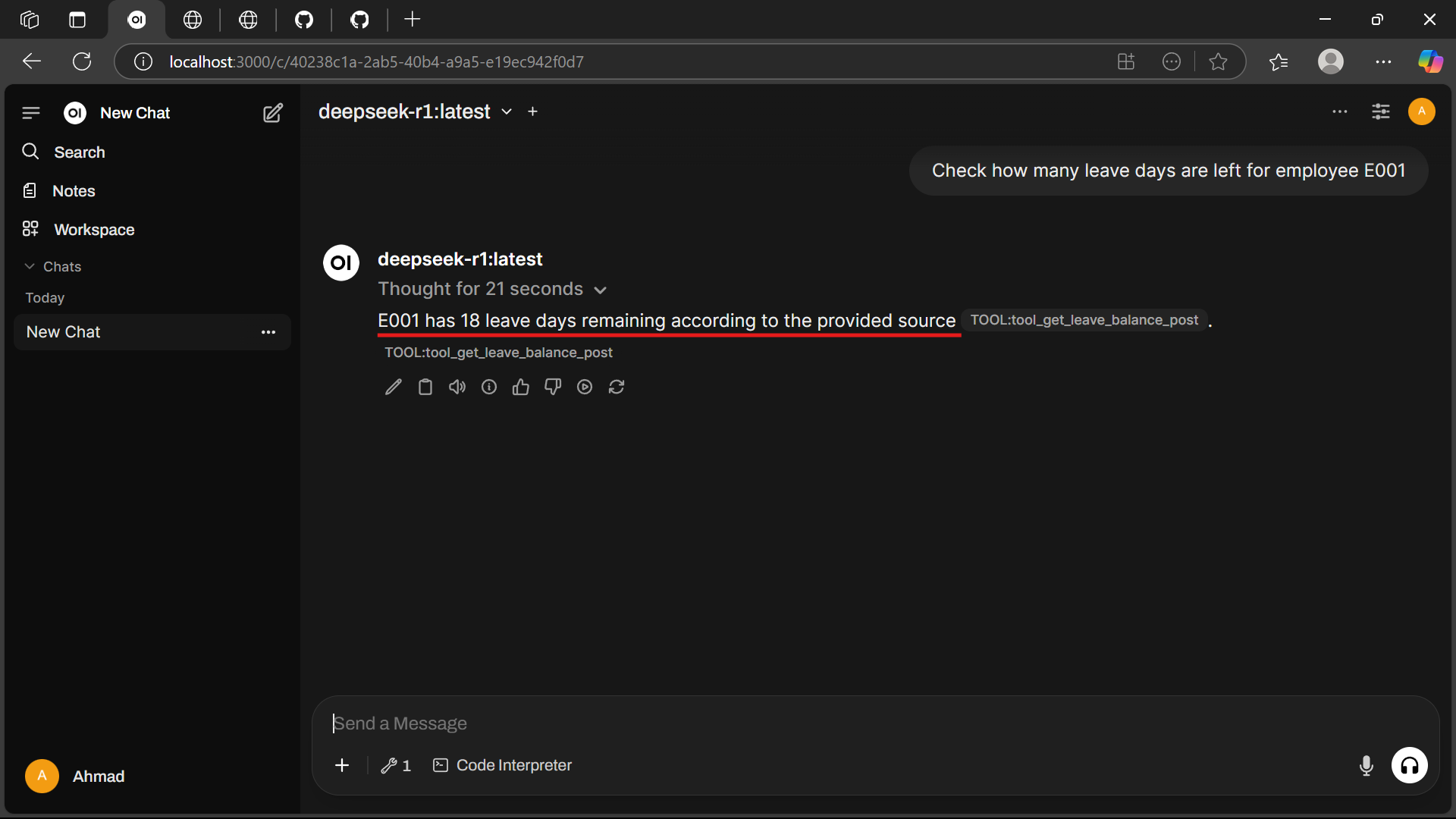

💬 Example Prompts

Use these prompts in Open WebUI to interact with the Leave Manager tool:

- Check Leave Balance:

Check how many leave days are left for employee E001

- Apply for Leave:

Apply

- View Leave History:

What's the leave history of E001?

- Personalized Greeting:

Greet me as Alice

🛠️ Troubleshooting

- Ollama not running: Ensure the service is active (ollama serve) and check http://localhost:11434.

- Docker issues: Verify Docker Desktop is running and you have sufficient disk space.

- Model not found: Confirm the deepseek-r1 model is listed with ollama list.

- Port conflicts: Ensure ports 11434, 3000, and 8000 are free.
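For the port check, a quick way to see which of these ports already have something listening is to try connecting to each one. This is a minimal sketch with hypothetical helper names; note that once Ollama is running, port 11434 is expected to show up as in use.

```python
import socket
from typing import Iterable, List


def port_in_use(port: int, host: str = "127.0.0.1", timeout: float = 0.5) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.settimeout(timeout)
        # connect_ex returns 0 on success and an error code otherwise.
        return s.connect_ex((host, port)) == 0


def busy_ports(ports: Iterable[int] = (11434, 3000, 8000)) -> List[int]:
    """Return the subset of ports that already have a listener."""
    return [p for p in ports if port_in_use(p)]


# Usage: print("Ports already in use:", busy_ports())
```

Run it before docker-compose up --build: if 3000 or 8000 appear in the result, another process is holding a port the stack needs.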
