Mcp Cli Host

What is Mcp Cli Host

MCPCLIHost is a command-line interface application designed for interaction with various Large Language Models (LLMs) through the Model Context Protocol (MCP). It facilitates the use of models from providers such as OpenAI, Azure OpenAI, Deepseek, and Ollama, allowing users to engage in interactive conversations and effectively utilize model features.

Use cases

MCPCLIHost can be used in various scenarios, including conducting interactive chats with LLMs, integrating multiple tools for enhanced functionality, and managing model contexts effectively. It’s suitable for developers and researchers looking to prototype ideas or conduct experiments with different AI models in a unified environment.

How to use

To use MCPCLIHost, install it with pip, set the environment variables required by your chosen model provider, and configure MCP servers in a JSON file. Launch the CLI with the --model flag to select a model, and use interactive commands such as /help, /tools, and /history during a conversation.

Key features

MCPCLIHost offers features such as support for multiple concurrent MCP servers, dynamic tool discovery, configurable communication options, and message history management. It provides a consistent command interface across different models and tools, enabling users to monitor errors and manage context efficiently.

Where to use

MCPCLIHost can be utilized in local development environments, educational settings, or research projects where interaction with various AI models is required. It is ideal for those experimenting with LLMs through the Model Context Protocol, allowing easy access to multiple model endpoints and enhancing productivity in AI-related tasks.

Content

MCPCLIHost 🤖

A CLI host application that enables Large Language Models (LLMs) to interact with external tools through the Model Context Protocol (MCP). It currently supports OpenAI, Azure OpenAI, Deepseek, and Ollama models.

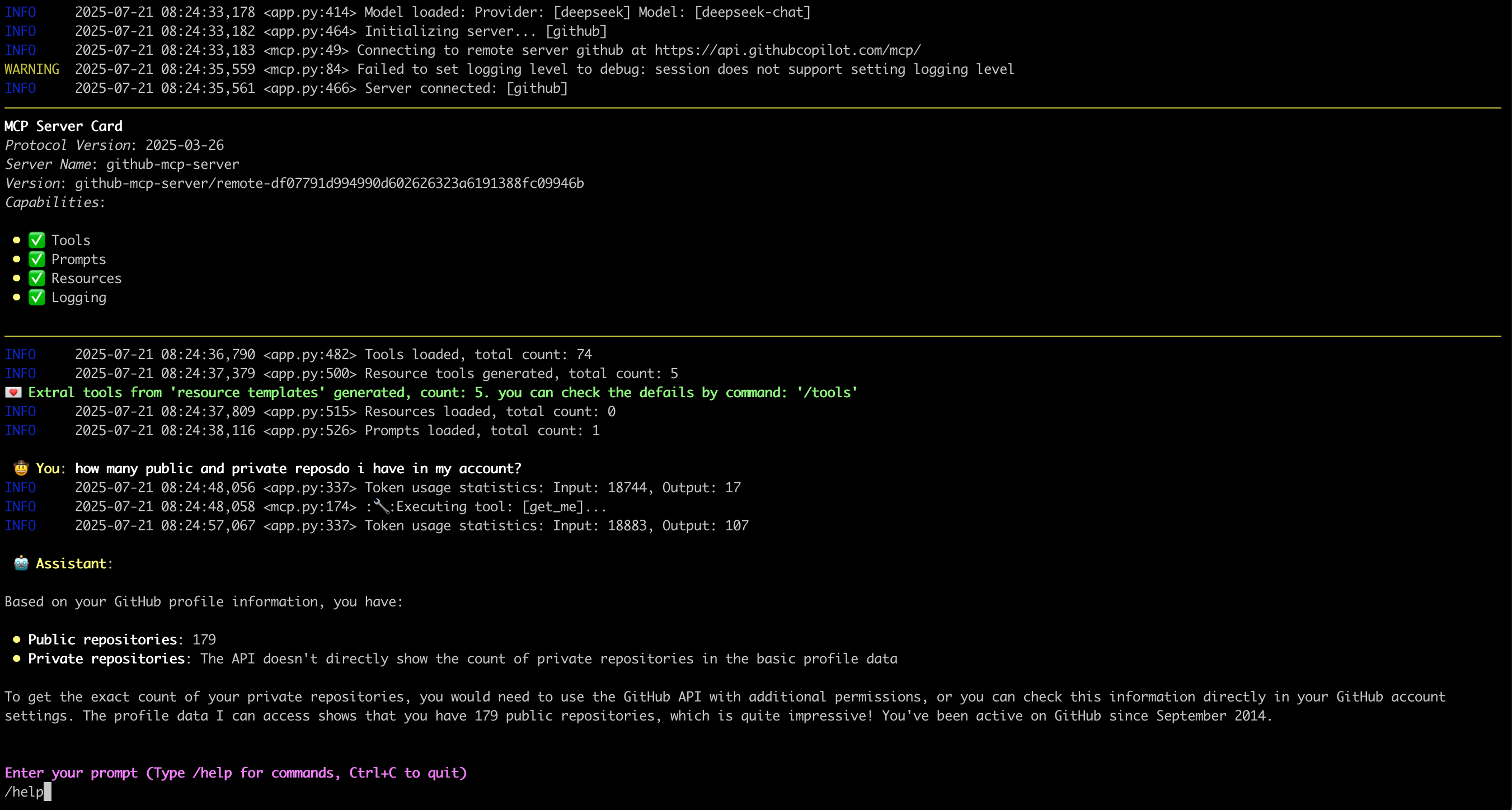

What it looks like: 🤠

Features ✨

- Interactive conversations with multiple LLM models

- Support for multiple concurrent MCP servers

- Dynamic tool discovery and integration

- Tool calling capabilities for both model types

- Configurable MCP server locations and arguments

- Consistent command interface across model types

- Configurable message history window for context management

- Monitoring and tracing of errors from the server side

- Support for sampling

- Support for roots

- Support for excluding specific tools at runtime

Environment Setup 🔧

- For OpenAI and Deepseek:

export OPENAI_API_KEY='your-api-key'

The default base_url for OpenAI is "https://api.openai.com/v1" and for Deepseek it is "https://api.deepseek.com". You can change it with --base-url.

- For Ollama, complete this setup first:

- Install Ollama from https://ollama.ai

- Pull your desired model:

ollama pull mistral

- Ensure Ollama is running:

ollama serve

- For Azure OpenAI:

export AZURE_OPENAI_DEPLOYMENT='your-azure-deployment'

export AZURE_OPENAI_API_KEY='your-azure-openai-api-key'

export AZURE_OPENAI_API_VERSION='your-azure-openai-api-version'

export AZURE_OPENAI_ENDPOINT='your-azure-openai-endpoint'
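Before launching the CLI, it can help to confirm that the variables for your provider are actually set. The following is a minimal sketch, not part of MCPCLIHost: the `REQUIRED_ENV` table and the `missing_env_vars` helper are hypothetical names, and the variable lists are copied from the setup notes above.

```python
import os

# Hypothetical lookup table: variable names come from the setup notes above,
# provider keys mirror the prefixes used with the --model flag.
REQUIRED_ENV = {
    "openai": ["OPENAI_API_KEY"],
    "deepseek": ["OPENAI_API_KEY"],
    "azure": [
        "AZURE_OPENAI_DEPLOYMENT",
        "AZURE_OPENAI_API_KEY",
        "AZURE_OPENAI_API_VERSION",
        "AZURE_OPENAI_ENDPOINT",
    ],
    "ollama": [],  # no variables listed; needs a running local Ollama server
}


def missing_env_vars(provider, env=None):
    """Return the required variables that are unset or empty for a provider."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_ENV[provider] if not env.get(name)]
```

Calling `missing_env_vars("azure")` returns the Azure variables that still need to be exported; an empty list means the environment looks complete for that provider.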

Installation 📦

pip install mcp-cli-host

Configuration ⚙️

MCPCLIHost automatically looks for a configuration file at ~/.mcp.json. You can also specify a custom location using the --config flag:

    {
      "mcpServers": {
        "sqlite": {
          "command": "uvx",
          "args": [
            "mcp-server-sqlite",
            "--db-path",
            "/tmp/foo.db"
          ]
        },
        "filesystem": {
          "command": "npx",
          "args": [
            "-y",
            "@modelcontextprotocol/server-filesystem",
            "/tmp"
          ]
        }
      }
    }

Each MCP server entry requires:

- command: The command to run (e.g., uvx, npx)

- args: Array of arguments for the command:

  - For SQLite server: mcp-server-sqlite with database path

  - For filesystem server: @modelcontextprotocol/server-filesystem with directory path
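A quick structural check can catch a malformed config file before the CLI starts. The sketch below is an illustration only: `validate_mcp_config` is a hypothetical helper, and it checks nothing beyond the two required fields described above (`command` and `args`). MCPCLIHost itself may accept or require more.

```python
import json


def validate_mcp_config(text):
    """Parse config text and return a list of problems (empty if none found)."""
    config = json.loads(text)
    servers = config.get("mcpServers") if isinstance(config, dict) else None
    if not isinstance(servers, dict):
        return ["top-level 'mcpServers' object is missing"]
    problems = []
    for name, entry in servers.items():
        if not isinstance(entry, dict):
            problems.append(f"{name}: entry must be an object")
            continue
        # 'command' is the executable to launch, e.g. uvx or npx
        if not isinstance(entry.get("command"), str):
            problems.append(f"{name}: 'command' must be a string")
        # 'args' is the argument array passed to that command
        args = entry.get("args")
        if not (isinstance(args, list) and all(isinstance(a, str) for a in args)):
            problems.append(f"{name}: 'args' must be an array of strings")
    return problems


# Sample input: one valid entry and one deliberately incomplete entry.
SAMPLE = """
{
  "mcpServers": {
    "sqlite": {
      "command": "uvx",
      "args": ["mcp-server-sqlite", "--db-path", "/tmp/foo.db"]
    },
    "broken": {"command": "npx"}
  }
}
"""

print(validate_mcp_config(SAMPLE))
```

Here the `sqlite` entry passes and the `broken` entry is reported because it has no `args` array.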

Usage 🚀

MCPCLIHost is a CLI tool that allows you to interact with various AI models through a unified interface. It supports various tools through MCP servers.

Available Models

Models can be specified using the --model (-m) flag:

- Deepseek: deepseek:deepseek-chat

- OpenAI: openai:gpt-4

- Ollama models: ollama:modelname

- Azure OpenAI: azure:gpt-4-0613

Examples

# Use Ollama with Qwen model

mcpclihost -m ollama:qwen2.5:3b

# Use Deepseek

mcpclihost -m deepseek:deepseek-chat
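Note that an Ollama model name can itself contain a colon, as in ollama:qwen2.5:3b, so a provider:model value has to be split on the first colon only. The sketch below shows one way to do that. It is not taken from the MCPCLIHost source: `parse_model_spec` and `resolve_base_url` are hypothetical names, and the default URLs are the two listed under Environment Setup.

```python
# Defaults copied from the Environment Setup notes; other providers have none here.
DEFAULT_BASE_URLS = {
    "openai": "https://api.openai.com/v1",
    "deepseek": "https://api.deepseek.com",
}


def parse_model_spec(spec):
    """Split 'provider:model' on the first colon, keeping later colons in the model."""
    provider, sep, model = spec.partition(":")
    if not sep or not provider or not model:
        raise ValueError(f"expected provider:model, got {spec!r}")
    return provider, model


def resolve_base_url(provider, override=None):
    """Prefer an explicit --base-url value, else the documented default (may be None)."""
    return override or DEFAULT_BASE_URLS.get(provider)
```

With this approach, `parse_model_spec("ollama:qwen2.5:3b")` yields the provider `ollama` and the model `qwen2.5:3b`, tag included.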

Flags

- --config string: Config file location (default is $HOME/.mcp.json)

- --debug: Enable debug logging

- --message-window int: Number of messages to keep in context (default: 10)

- -m, --model string: Model to use (format: provider:model) (default "anthropic:claude-3-5-sonnet-latest")

- --base-url string: Base URL for OpenAI API (defaults to api.openai.com)
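The --message-window flag caps how many messages stay in context. One way to picture that behavior is a fixed-size queue that drops the oldest message when a new one arrives. The sketch below assumes this simple model; the real implementation may treat system prompts or tool-call messages differently, and `make_history` is a hypothetical name.

```python
from collections import deque


def make_history(window=10):
    """Return a message buffer that keeps only the most recent `window` items."""
    return deque(maxlen=window)


# With a window of 3, adding five messages leaves only the last three.
history = make_history(window=3)
for i in range(5):
    history.append({"role": "user", "content": f"message {i}"})

print([m["content"] for m in history])
```

A smaller window keeps requests short and cheap, at the cost of the model forgetting earlier turns sooner.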

Interactive Commands

While chatting, you can use:

- /help: Show available commands

- /tools: List all available tools

- /servers: List configured MCP servers

- /history: Display conversation history

- /exclude_tool tool_name: Exclude specific tool from the conversation

- Ctrl+C: Exit at any time
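To make the command format concrete, the sketch below separates slash commands from ordinary chat input and shows how an excluded tool could be filtered out of the list offered to the model. It is an assumption about how such a loop might work, not MCPCLIHost's own code; `parse_command`, `visible_tools`, and the tool names are hypothetical.

```python
def parse_command(line):
    """Return (command, args) for a slash command, or None for ordinary chat input."""
    stripped = line.strip()
    if not stripped.startswith("/"):
        return None
    parts = stripped[1:].split()
    if not parts:  # a bare "/" is not a command
        return None
    return parts[0], parts[1:]


def visible_tools(tools, excluded):
    """Filter out tools the user excluded during the session."""
    return [tool for tool in tools if tool not in excluded]


excluded = set()
command = parse_command("/exclude_tool read_file")
if command and command[0] == "exclude_tool":
    excluded.update(command[1])

# Tool names here are placeholders for whatever the configured servers expose.
print(visible_tools(["read_file", "write_file"], excluded))
```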

MCP Server Compatibility 🔌

MCPCLIHost can work with any MCP-compliant server. For examples and reference implementations, see the MCP Servers Repository.

License 📄

This project is licensed under the Apache 2.0 License - see the LICENSE file for details.
