Local RAG

What is Local RAG

mcp-local-rag is a locally running server designed to enhance language model queries by providing a web search capability. It operates without reliance on external APIs, enabling users to fetch real-time information from the web and integrate it into their language model responses.

Use cases

This tool is particularly useful for applications requiring up-to-date information retrieval, such as answering questions about recent events or retrieving specific content from the web. It enhances the capabilities of language models like Claude by allowing them to access recent web data.

How to use

Users can set up mcp-local-rag by configuring it in their MCP server configuration, either directly using the uv tool or via Docker. The setup involves adding a command to the MCP configuration that specifies how to run the mcp-local-rag server.
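As a rough illustration of that step, the sketch below merges a server entry into an MCP client configuration held as a dictionary. The `mcpServers` key and the uvx arguments come from the configuration shown under Installation; the helper name `add_server` and the idea of merging programmatically are assumptions made for illustration, and editing the JSON file by hand works just as well.

```python
import json
from copy import deepcopy


def add_server(config: dict, name: str, command: str, args: list[str]) -> dict:
    """Return a copy of `config` with one entry added under "mcpServers".

    `add_server` is a hypothetical helper, not part of mcp-local-rag.
    """
    updated = deepcopy(config)
    servers = updated.setdefault("mcpServers", {})
    servers[name] = {"command": command, "args": list(args)}
    return updated


# Arguments copied from the uvx configuration in this README.
UVX_ARGS = [
    "--python=3.10",
    "--from",
    "git+https://github.com/nkapila6/mcp-local-rag",
    "mcp-local-rag",
]

if __name__ == "__main__":
    # A made-up existing configuration with one unrelated server.
    existing = {"mcpServers": {"other-server": {"command": "node", "args": []}}}
    merged = add_server(existing, "mcp-local-rag", "uvx", UVX_ARGS)
    print(json.dumps(merged, indent=2))
```

Returning a copy rather than mutating the input keeps any other servers already present in the configuration untouched until the result is written back.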

Key features

mcp-local-rag runs a live DuckDuckGo search, fetches 10 results, embeds them with Google's MediaPipe Text Embedder, and ranks them by similarity to the query. It then extracts context from the top-k URLs and returns it as Markdown, enriching responses to user queries without needing external APIs.

Where to use

The server should work with any MCP client that supports tool calling. It has been tested with Claude Desktop, Cursor, and Goose, so it suits any setup that needs language model interactions grounded in fresh web data.

mcp-local-rag

A “primitive” RAG-like web search Model Context Protocol (MCP) server that runs locally. ✨ no APIs ✨

%%{init: {'theme': 'base'}}%%
flowchart TD
    A[User] -->|1.Submits LLM Query| B[Language Model]
    B -->|2.Sends Query| C[mcp-local-rag Tool]

    subgraph mcp-local-rag Processing
    C -->|Search DuckDuckGo| D[Fetch 10 search results]
    D -->|Fetch Embeddings| E[Embeddings from Google's MediaPipe Text Embedder]
    E -->|Compute Similarity| F[Rank Entries Against Query]
    F -->|Select top k results| G[Context Extraction from URL]
    end

    G -->|Returns Markdown from HTML content| B
    B -->|3.Generated response with context| H[Final LLM Output]
    H -->|4.Present result to user| A

    classDef default stroke:#333,stroke-width:2px;
    classDef process stroke:#333,stroke-width:2px;
    classDef input stroke:#333,stroke-width:2px;
    classDef output stroke:#333,stroke-width:2px;

    class A input;
    class B,C process;
    class G output;
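The ranking stage of the flowchart (embed, compute similarity, select top k) can be sketched roughly as follows. This is not the project's code: the `embed` function here is a toy word-count stand-in for Google's MediaPipe Text Embedder so the example runs with the standard library only, and the result fields (`url`, `title`, `snippet`) are assumed names for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in embedding: lowercase word counts.

    The real server uses Google's MediaPipe Text Embedder; this is only
    a placeholder so the example runs without extra dependencies.
    """
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_k(query: str, results: list[dict], k: int = 3) -> list[dict]:
    """Rank search results against the query and keep the k best."""
    q = embed(query)
    ranked = sorted(
        results,
        key=lambda r: cosine(q, embed(r["title"] + " " + r["snippet"])),
        reverse=True,
    )
    return ranked[:k]


if __name__ == "__main__":
    # Hypothetical search results; the field names are assumptions.
    hits = [
        {"url": "https://example.com/a", "title": "Gemma model release",
         "snippet": "Google releases new Gemma models"},
        {"url": "https://example.com/b", "title": "Cooking pasta",
         "snippet": "How to boil pasta"},
    ]
    for hit in top_k("latest Gemma models", hits, k=1):
        print(hit["url"])
```

Only the URLs that survive this ranking would then be fetched in full for context extraction, which keeps the number of page downloads small.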

Installation

Locate your MCP config path in your MCP client's documentation, or check your MCP client settings.

Run Directly via uvx

This is the easiest and quickest method. You need to install uv for this to work.
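Before editing the configuration, it may help to confirm that the required executable is on your PATH. The sketch below does this with the standard library; `missing_tools` is a hypothetical helper name, and the check assumes your MCP client launches commands with the same PATH as your shell, which is not always the case.

```python
import shutil


def missing_tools(tools: list[str], which=shutil.which) -> list[str]:
    """Return the tools from `tools` that are not found on PATH."""
    return [tool for tool in tools if which(tool) is None]


if __name__ == "__main__":
    # `uvx` is installed alongside `uv`.
    missing = missing_tools(["uvx"])
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("uvx is available")
```

The same function can be called with `["docker"]` if you plan to use the Docker method instead.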

Add this to your MCP server configuration:

{
  "mcpServers": {
    "mcp-local-rag": {
      "command": "uvx",
      "args": [
        "--python=3.10",
        "--from",
        "git+https://github.com/nkapila6/mcp-local-rag",
        "mcp-local-rag"
      ]
    }
  }
}

Using Docker (recommended)

Ensure you have Docker installed.

Add this to your MCP server configuration:

{
  "mcpServers": {
    "mcp-local-rag": {
      "command": "docker",
      "args": [
        "run",
        "--rm",
        "-i",
        "--init",
        "-e",
        "DOCKER_CONTAINER=true",
        "ghcr.io/nkapila6/mcp-local-rag:latest"
      ]
    }
  }
}

Security audits

MseeP runs security audits on every MCP server. The audit for this MCP server is available on MseeP.

MCP Clients

The MCP server should work with any MCP client that supports tool calling. It has been tested on the clients below.

- Claude Desktop

- Cursor

- Goose

- Others? You try!

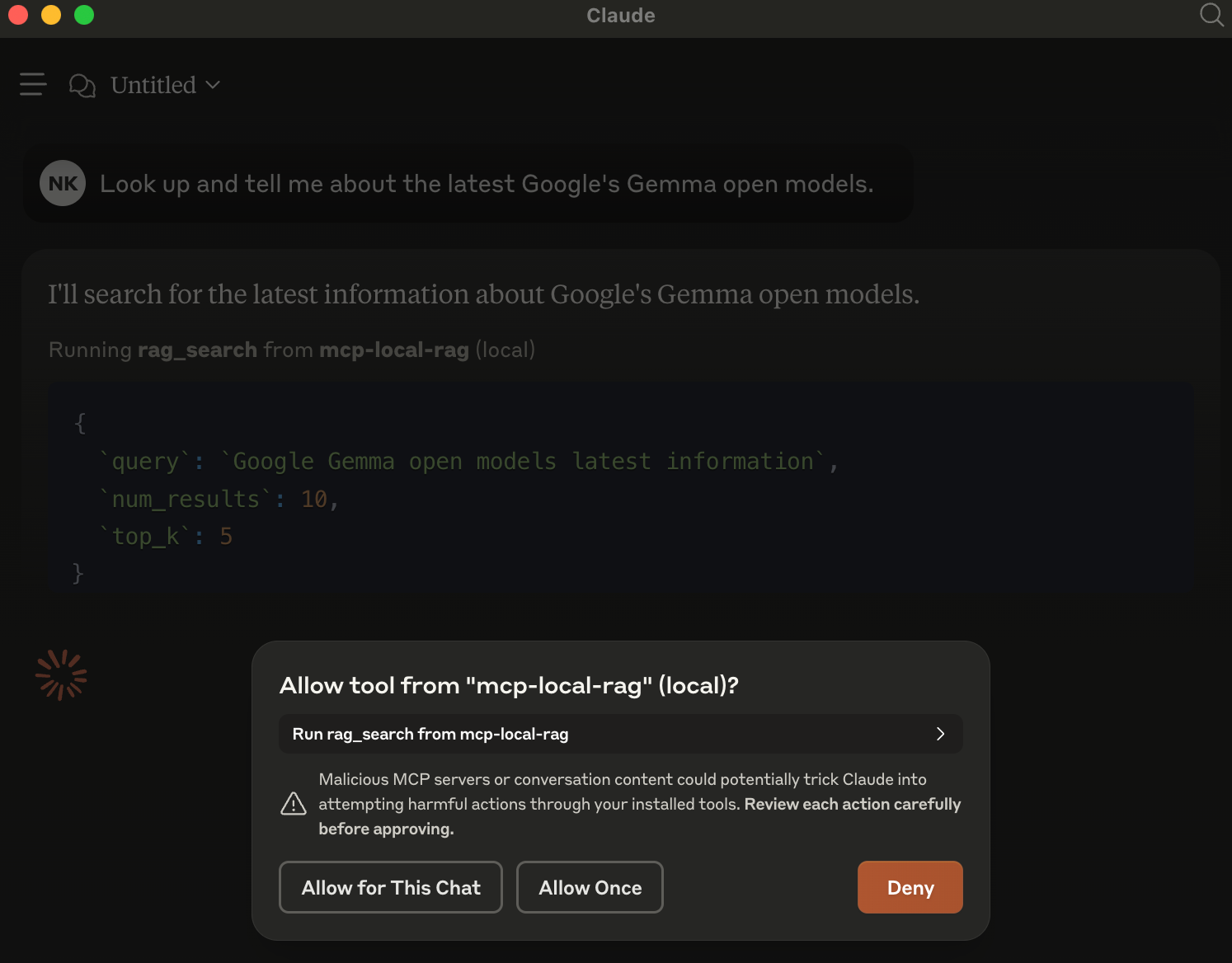

Examples on Claude Desktop

When an LLM (like Claude) is asked a question requiring recent web information, it will trigger mcp-local-rag.
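Under the hood, an MCP client triggers a tool by sending a JSON-RPC 2.0 `tools/call` request to the server. The sketch below builds such a message to show its general shape. The tool name `web_search` and the `query` argument are placeholders, not confirmed identifiers from mcp-local-rag; a client would normally discover the real names by listing the server's tools.

```python
import json
from itertools import count

# Simple incrementing request ids for this illustration.
_ids = count(1)


def tool_call_request(tool: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 `tools/call` request as used by MCP."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


if __name__ == "__main__":
    # "web_search" and "query" are placeholder names, not confirmed
    # identifiers from mcp-local-rag.
    print(tool_call_request("web_search", {"query": "latest Gemma models"}))
```

In practice the MCP client constructs and sends this message for you; the example only shows what "tool calling" means at the protocol level.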

When asked to fetch, look up, or search the web, the model prompts you to allow the MCP server to be used for the chat.

In the example, I asked it about Google’s latest Gemma models, which had been released the previous day. This is new information that Claude is not aware of.

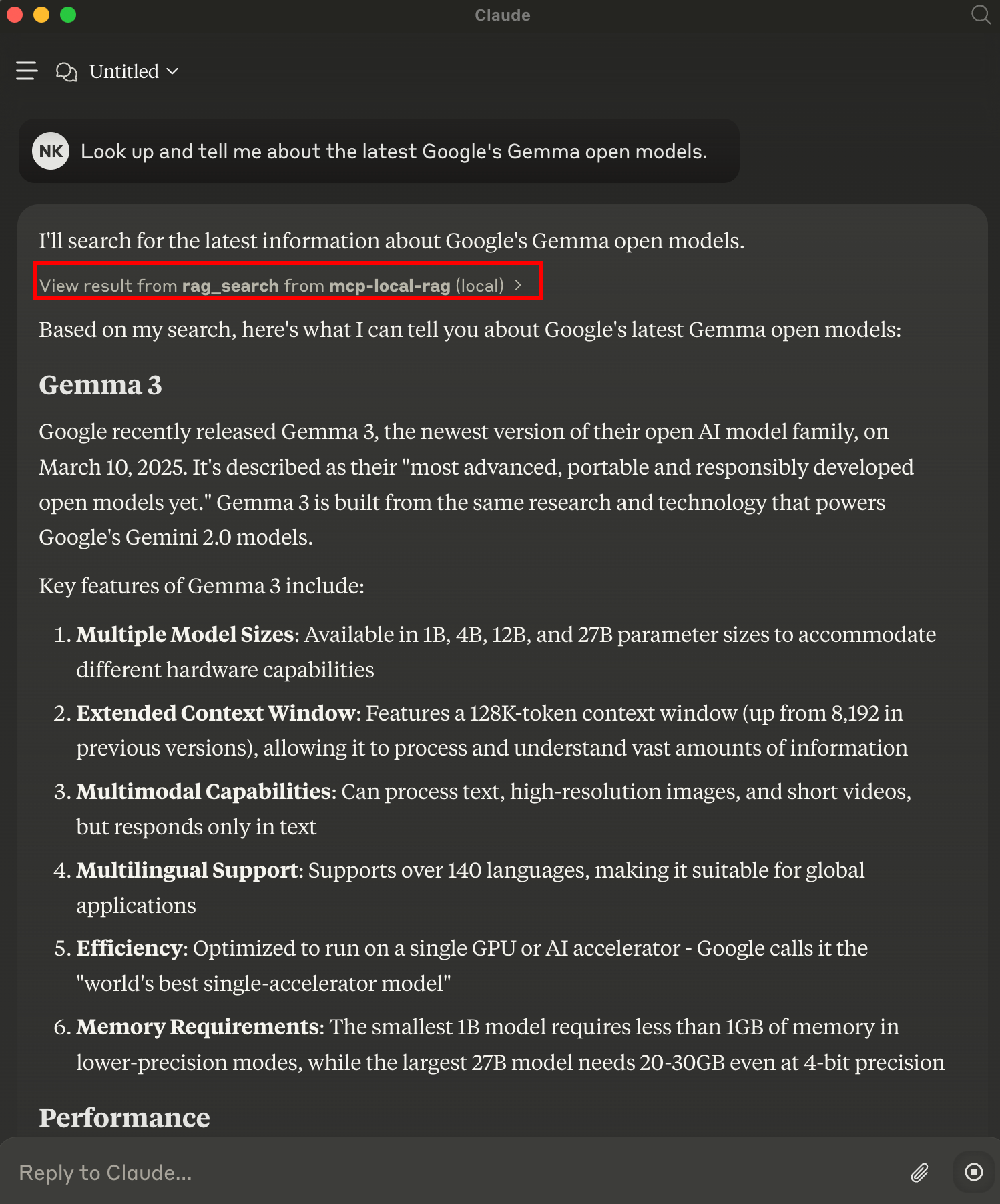

Result

mcp-local-rag performs a live web search, extracts context, and sends it back to the model, giving it fresh knowledge.

Contributing

Have ideas or want to improve this project? Issues and pull requests are welcome!

License

This project is licensed under the MIT License.
